Define the VGG block:

layers.append() adds one element to the end of the list.

    import torch
    from torch import nn
    from d2l import torch as d2l


    def vgg_block(num_convs, in_channels, out_channels):
        layers = []  # holds every layer of this block
        for _ in range(num_convs):  # repeat num_convs times
            # append a 3x3 convolution (padding=1 keeps height and width) and its ReLU
            layers.append(nn.Conv2d(in_channels, out_channels, kernel_size=3, padding=1))
            layers.append(nn.ReLU())
            in_channels = out_channels  # later convolutions take out_channels as input
        layers.append(nn.MaxPool2d(kernel_size=2, stride=2))  # halves height and width
        # unpack the list so each layer is passed to nn.Sequential as a separate argument
        return nn.Sequential(*layers)
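The append-then-unpack pattern used in the block can be tried without PyTorch. The sketch below is only an illustration: `describe` is a hypothetical stand-in for a constructor that, like `nn.Sequential`, takes its items as separate positional arguments, and the layer names are plain strings rather than real modules.

```python
def describe(*modules):
    """Hypothetical stand-in for a constructor that takes layers as separate arguments."""
    return " -> ".join(modules)


layers = []
for _ in range(2):            # two "convolutions", as in vgg_block(num_convs=2, ...)
    layers.append("Conv2d")   # append adds one item to the end of the list
    layers.append("ReLU")
layers.append("MaxPool2d")

# *layers spreads the list into five separate positional arguments
pipeline = describe(*layers)
print(pipeline)
```

Calling `describe(*layers)` is equivalent to writing out `describe("Conv2d", "ReLU", "Conv2d", "ReLU", "MaxPool2d")` by hand, which is why the list can be built in a loop first.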

Define the network model:

    conv_arch = ((1, 64), (1, 128), (2, 256), (2, 512), (2, 512))


    def vgg(conv_arch):
        conv_blks = []
        in_channels = 1  # Fashion-MNIST images have a single channel
        for (num_convs, out_channels) in conv_arch:
            conv_blks.append(vgg_block(num_convs, in_channels, out_channels))
            in_channels = out_channels
        return nn.Sequential(
            *conv_blks, nn.Flatten(),
            # five 2x2 poolings reduce a 224x224 input to 7x7
            nn.Linear(out_channels * 7 * 7, 4096), nn.ReLU(), nn.Dropout(0.5),
            nn.Linear(4096, 4096), nn.ReLU(), nn.Dropout(0.5),
            nn.Linear(4096, 10))


    net = vgg(conv_arch)

Check the output shape of each layer:
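The sizes can be predicted by hand before running anything. The sketch below assumes the standard output-size rule for convolution and pooling with no dilation, floor((n + 2p - k) / s) + 1; the helper names `out_size` and `vgg_block_out` are made up for this illustration and are not part of PyTorch or d2l.

```python
def out_size(n, kernel, stride=1, padding=0):
    """Output length along one spatial axis for a conv or pooling layer (no dilation)."""
    return (n + 2 * padding - kernel) // stride + 1


def vgg_block_out(n, num_convs):
    """Spatial size after one VGG block: num_convs 3x3 convs, then a 2x2 max pool."""
    for _ in range(num_convs):
        n = out_size(n, kernel=3, padding=1)   # 3x3 with padding 1 keeps the size
    return out_size(n, kernel=2, stride=2)     # 2x2 pooling with stride 2 halves it


conv_arch = ((1, 64), (1, 128), (2, 256), (2, 512), (2, 512))

size = 224
sizes = []
for num_convs, out_channels in conv_arch:
    size = vgg_block_out(size, num_convs)
    sizes.append(size)
    print(f"block output: {out_channels} x {size} x {size}")

# number of inputs to the first fully connected layer
flat_features = conv_arch[-1][1] * size * size
print("features after Flatten:", flat_features)
```

Each block halves the height and width, so a 224x224 input ends at 7x7, which is where the `out_channels * 7 * 7` in the first `nn.Linear` comes from. Passing a random input through `net` layer by layer is a way to confirm the same numbers: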

    X = torch.randn(size=(1, 1, 224, 224))
    for blk in net:
        X = blk(X)
        print(blk.__class__.__name__, 'output shape:\t', X.shape)

Shrink VGG by defining a smaller model:

    ratio = 4
    # keep the number of convolutions per block, divide every channel count by ratio
    small_conv_arch = [(pair[0], pair[1] // ratio) for pair in conv_arch]
    net = vgg(small_conv_arch)

Train the model:

    lr, num_epochs, batch_size = 0.05, 10, 128
    # resize=224 scales the 28x28 Fashion-MNIST images up to the input size the network expects
    train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size, resize=224)

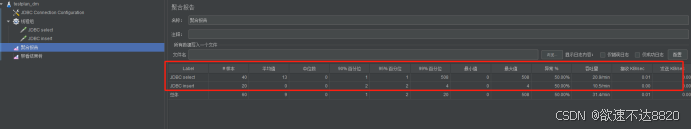

    d2l.train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())
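To get a feel for why the smaller network is the one being trained, the parameter counts of the two architectures can be tallied by hand. This is a rough sketch under stated assumptions: 3x3 convolutions with bias, one input channel, a 7x7 final feature map, and the same 4096-4096-10 classifier head as in `vgg` above. The helper names (`conv_params`, `linear_params`, `count_vgg_params`) are hypothetical and not part of d2l.

```python
def conv_params(in_channels, out_channels, kernel_size=3):
    # one kernel_size x kernel_size filter per (input, output) channel pair, plus one bias per output
    return kernel_size * kernel_size * in_channels * out_channels + out_channels


def linear_params(in_features, out_features):
    # weight matrix plus one bias per output
    return in_features * out_features + out_features


def count_vgg_params(conv_arch, in_channels=1, final_size=7, hidden=4096, num_classes=10):
    """Return (conv parameters, fully connected parameters) for the vgg() layout."""
    conv_total = 0
    for num_convs, out_channels in conv_arch:
        for _ in range(num_convs):
            conv_total += conv_params(in_channels, out_channels)
            in_channels = out_channels
    # ReLU, MaxPool2d, Flatten and Dropout have no learnable parameters
    fc_total = (linear_params(in_channels * final_size * final_size, hidden)
                + linear_params(hidden, hidden)
                + linear_params(hidden, num_classes))
    return conv_total, fc_total


conv_arch = ((1, 64), (1, 128), (2, 256), (2, 512), (2, 512))
ratio = 4
small_conv_arch = [(n, c // ratio) for n, c in conv_arch]

for name, arch in (("full", conv_arch), ("small", small_conv_arch)):
    conv_total, fc_total = count_vgg_params(arch)
    print(f"{name}: conv={conv_total:,} fc={fc_total:,} total={conv_total + fc_total:,}")
```

Under these assumptions the convolutional parameters shrink by close to `ratio ** 2`, while the fully connected layers keep their 4096-unit width and account for most of what remains.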
