- 🍨 This post is a learning-log entry from the 🔗365天深度学习训练营 (365-Day Deep Learning Training Camp)

- 🍖 Original author: K同学啊

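Table of Contents

- I. Preparation

- 1. Overall structure of ResNet-50

- 2. Set up the GPU

- 3. Import the data

- II. Data Preprocessing

- 1. Load the data

- 2. Visualize the data

- 3. Check the data again

- 4. Configure the dataset

- III. Build the ResNet-50 Model

- IV. Compile

- V. Train the Model

- VI. Evaluate the Model

- VII. Prediction

- VIII. Summary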

Environment:

Language: Python 3.8.0

Editor: Jupyter Notebook

Deep learning framework: TensorFlow 2.17.0

I. Preparation

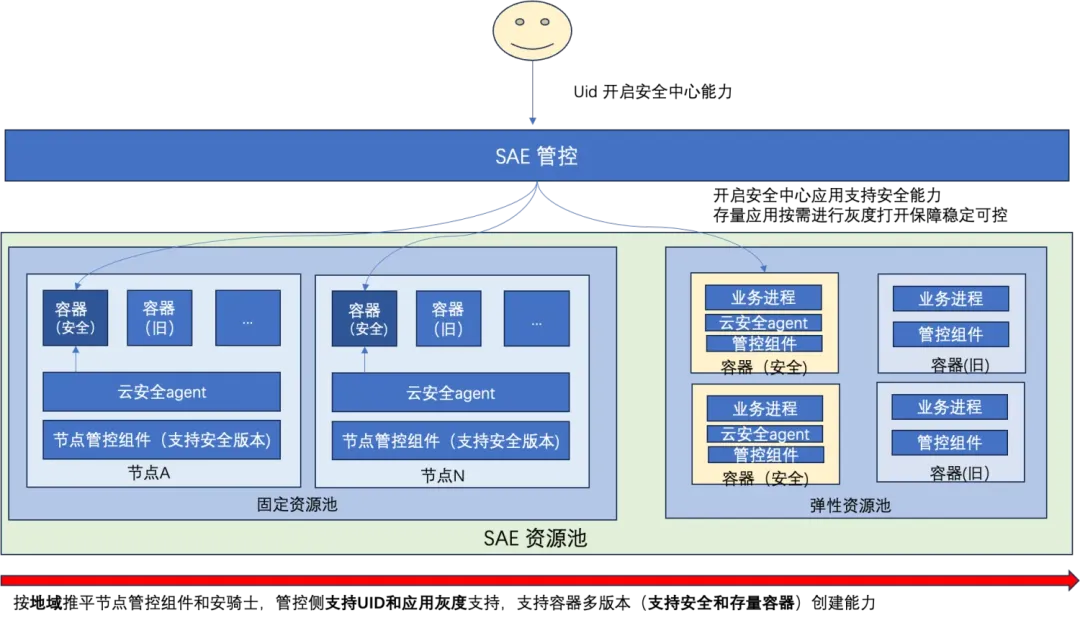

1、ResNet-50总体结构
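The "50" in ResNet-50 counts the layers that carry weights. As a rough sketch of that count, assuming the usual stage layout of 3, 4, 6 and 3 bottleneck blocks (the same layout the model code in section III follows) and ignoring the 1x1 projection convolutions on the shortcuts, which by convention are not counted. The variable names are illustrative only:

```python
# Bottleneck blocks per stage (stages 2-5); names here are illustrative.
blocks_per_stage = [3, 4, 6, 3]
convs_per_block = 3   # 1x1 -> 3x3 -> 1x1 inside every bottleneck block

stem_conv = 1         # the initial 7x7 convolution
fc_layer = 1          # the final fully connected classifier

total_layers = stem_conv + convs_per_block * sum(blocks_per_stage) + fc_layer
print(total_layers)   # 50
```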

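2. Set up the GPU

import tensorflow as tf

gpus = tf.config.list_physical_devices('GPU')

if gpus:
    # Allocate GPU memory on demand instead of reserving all of it up front
    tf.config.experimental.set_memory_growth(gpus[0], True)
    # Use only the first GPU
    tf.config.set_visible_devices(gpus[0], 'GPU')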

3. Import the data

import matplotlib.pyplot as plt
# Use a font that can render Chinese labels
plt.rcParams['font.sans-serif'] = ['SimHei']
# Render minus signs correctly with that font
plt.rcParams['axes.unicode_minus'] = False

import os, PIL, pathlib
import numpy as np
from tensorflow import keras
from keras import layers, models

data_dir = './bird_photos'
data_dir = pathlib.Path(data_dir)

# Count every file one level below the class folders
image_count = len(list(data_dir.glob('*/*')))
image_count

565

II. Data Preprocessing

1. Load the data

batch_size = 8
img_height = 224
img_width = 224

train_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.2,
    subset='training',
    seed=123,
    image_size=(img_height, img_width),
    batch_size=batch_size)

val_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.2,
    subset='validation',
    seed=123,
    image_size=(img_height, img_width),
    batch_size=batch_size)

We can print the dataset's labels through class_names; they correspond to the directory names in alphabetical order.

class_names = train_ds.class_names

class_names

['Bananaquit', 'Black Skimmer', 'Black Throated Bushtiti', 'Cockatoo']
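To make the label ordering concrete, here is a plain-Python sketch that mimics it with `sorted`. It does not call TensorFlow; the temporary folders are made up to mirror the four class names above, and the `label_of` mapping is only an illustration of how a position in the list becomes the integer label:

```python
import os
import tempfile

# Hypothetical folder layout mirroring the four classes above, created out of order.
folders = ['Cockatoo', 'Bananaquit', 'Black Throated Bushtiti', 'Black Skimmer']

with tempfile.TemporaryDirectory() as root:
    for name in folders:
        os.makedirs(os.path.join(root, name))

    # Sort the sub-directory names; the position in this list is the integer label.
    class_names = sorted(
        d for d in os.listdir(root) if os.path.isdir(os.path.join(root, d))
    )

label_of = {name: idx for idx, name in enumerate(class_names)}
print(class_names)
print(label_of['Cockatoo'])  # 3
```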

2. Visualize the data

plt.figure(figsize=(10, 4))

# Show the 8 images of the first batch with their class names
for images, labels in train_ds.take(1):
    for i in range(8):
        ax = plt.subplot(2, 4, i + 1)
        plt.imshow(images[i].numpy().astype('uint8'))
        plt.title(class_names[labels[i]])
        plt.axis('off')

plt.imshow(images[0].numpy().astype('uint8'))

3. Check the data again

for image_batch, labels_batch in train_ds:
    print(image_batch.shape)
    print(labels_batch.shape)
    break

(8, 224, 224, 3)

(8,)
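The shapes above describe one full batch: 8 RGB images of 224x224 pixels and 8 integer labels. The number of batches follows from the split. A small arithmetic sketch, assuming the validation subset receives `int(validation_split * image_count)` images and the rest go to training (that rule is an assumption about the loader, though the result agrees with the 57 steps per epoch in the training log):

```python
import math

image_count = 565
validation_split = 0.2
batch_size = 8

# Assumption: validation gets int(split * total) images, training gets the rest.
val_count = int(validation_split * image_count)
train_count = image_count - val_count

train_batches = math.ceil(train_count / batch_size)
last_batch_size = train_count - (train_batches - 1) * batch_size

print(train_count, val_count)  # 452 113
print(train_batches)           # 57
print(last_batch_size)         # 4
```

Under these assumptions the final training batch holds only 4 images, so its shape would be (4, 224, 224, 3) rather than (8, 224, 224, 3).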

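4. Configure the dataset

AUTOTUNE = tf.data.experimental.AUTOTUNE

# Cache decoded images, shuffle the training set, and prefetch batches in the background
train_ds = train_ds.cache().shuffle(1000).prefetch(buffer_size=AUTOTUNE)
val_ds = val_ds.cache().prefetch(buffer_size=AUTOTUNE)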

III. Build the ResNet-50 Model

from keras import layers
from keras.layers import Input, Activation, BatchNormalization, Flatten
from keras.layers import Dense, Conv2D, MaxPooling2D, ZeroPadding2D, AveragePooling2D
from keras.models import Model


def identity_block(input_tensor, kernel_size, filters, stage, block):
    # Bottleneck block whose shortcut is the unchanged input
    filters1, filters2, filters3 = filters
    name_base = str(stage) + block + '_identity_block_'

    x = Conv2D(filters1, (1, 1), name=name_base + 'conv1')(input_tensor)
    x = BatchNormalization(name=name_base + 'bn1')(x)
    x = Activation('relu', name=name_base + 'relu1')(x)

    x = Conv2D(filters2, kernel_size, padding='same', name=name_base + 'conv2')(x)
    x = BatchNormalization(name=name_base + 'bn2')(x)
    x = Activation('relu', name=name_base + 'relu2')(x)

    x = Conv2D(filters3, (1, 1), name=name_base + 'conv3')(x)
    x = BatchNormalization(name=name_base + 'bn3')(x)

    # Add the input back onto the block output (residual connection)
    x = layers.add([x, input_tensor], name=name_base + 'add')
    x = Activation('relu', name=name_base + 'relu3')(x)
    return x


def conv_block(input_tensor, kernel_size, filters, stage, block, strides=(2, 2)):
    # Bottleneck block whose shortcut is a 1x1 convolution, used when the shape changes
    filters1, filters2, filters3 = filters
    res_name_base = str(stage) + block + '_conv_block_res_'
    name_base = str(stage) + block + '_conv_block_'

    x = Conv2D(filters1, (1, 1), strides=strides, name=name_base + 'conv1')(input_tensor)
    x = BatchNormalization(name=name_base + 'bn1')(x)
    x = Activation('relu', name=name_base + 'relu1')(x)

    x = Conv2D(filters2, kernel_size, padding='same', name=name_base + 'conv2')(x)
    x = BatchNormalization(name=name_base + 'bn2')(x)
    x = Activation('relu', name=name_base + 'relu2')(x)

    x = Conv2D(filters3, (1, 1), name=name_base + 'conv3')(x)
    x = BatchNormalization(name=name_base + 'bn3')(x)

    # Project the input so it matches the main path in size and channels
    shortcut = Conv2D(filters3, (1, 1), strides=strides, name=res_name_base + 'conv')(input_tensor)
    shortcut = BatchNormalization(name=res_name_base + 'bn')(shortcut)

    x = layers.add([x, shortcut], name=name_base + 'add')
    x = Activation('relu', name=name_base + 'relu3')(x)
    return x


def ResNet50(input_shape=(224, 224, 3), classes=1000):
    img_input = Input(shape=input_shape)
    x = ZeroPadding2D((3, 3))(img_input)

    # Stage 1: 7x7 convolution followed by max pooling
    x = Conv2D(64, (7, 7), strides=(2, 2), name='conv1')(x)
    x = BatchNormalization(name='bn_conv1')(x)
    x = Activation('relu')(x)
    x = MaxPooling2D((3, 3), strides=(2, 2))(x)

    # Stage 2
    x = conv_block(x, 3, [64, 64, 256], stage=2, block='a', strides=(1, 1))
    x = identity_block(x, 3, [64, 64, 256], stage=2, block='b')
    x = identity_block(x, 3, [64, 64, 256], stage=2, block='c')

    # Stage 3
    x = conv_block(x, 3, [128, 128, 512], stage=3, block='a')
    x = identity_block(x, 3, [128, 128, 512], stage=3, block='b')
    x = identity_block(x, 3, [128, 128, 512], stage=3, block='c')
    x = identity_block(x, 3, [128, 128, 512], stage=3, block='d')

    # Stage 4
    x = conv_block(x, 3, [256, 256, 1024], stage=4, block='a')
    x = identity_block(x, 3, [256, 256, 1024], stage=4, block='b')
    x = identity_block(x, 3, [256, 256, 1024], stage=4, block='c')
    x = identity_block(x, 3, [256, 256, 1024], stage=4, block='d')
    x = identity_block(x, 3, [256, 256, 1024], stage=4, block='e')
    x = identity_block(x, 3, [256, 256, 1024], stage=4, block='f')

    # Stage 5
    x = conv_block(x, 3, [512, 512, 2048], stage=5, block='a')
    x = identity_block(x, 3, [512, 512, 2048], stage=5, block='b')
    x = identity_block(x, 3, [512, 512, 2048], stage=5, block='c')

    # Classification head
    x = AveragePooling2D((7, 7), name='avg_pool')(x)
    x = Flatten()(x)
    x = Dense(classes, activation='softmax', name='fc1000')(x)

    model = Model(img_input, x, name='resnet50')

    # Load the pretrained weights
    model.load_weights('./resnet50_weights_tf_dim_ordering_tf_kernels.h5')
    return model


model = ResNet50()
model.summary()

Model: "resnet50"
┏━━━━━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━━━━━━━━━┓
┃ Layer (type)              ┃ Output Shape           ┃        Param # ┃ Connected to           ┃
┡━━━━━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━━━━━━━━━┩
│ input_layer (InputLayer)  │ (None, 224, 224, 3)    │              0 │ -                      │
├───────────────────────────┼────────────────────────┼────────────────┼────────────────────────┤
│ zero_padding2d            │ (None, 230, 230, 3)    │              0 │ input_layer[0][0]      │
│ (ZeroPadding2D)           │                        │                │                        │
├───────────────────────────┼────────────────────────┼────────────────┼────────────────────────┤
│ conv1 (Conv2D)            │ (None, 112, 112, 64)   │          9,472 │ zero_padding2d[0][0]   │
├───────────────────────────┼────────────────────────┼────────────────┼────────────────────────┤
│ bn_conv1                  │ (None, 112, 112, 64)   │            256 │ conv1[0][0]            │
│ (BatchNormalization)      │                        │                │                        │
├───────────────────────────┼────────────────────────┼────────────────┼────────────────────────┤
│ activation (Activation)   │ (None, 112, 112, 64)   │              0 │ bn_conv1[0][0]         │
├───────────────────────────┼────────────────────────┼────────────────┼────────────────────────┤
│ max_pooling2d             │ (None, 55, 55, 64)     │              0 │ activation[0][0]       │
│ (MaxPooling2D)            │                        │                │                        │
..............................................................
├───────────────────────────┼────────────────────────┼────────────────┼────────────────────────┤
│ avg_pool                  │ (None, 1, 1, 2048)     │              0 │ 5c_identity_block_rel… │
│ (AveragePooling2D)        │                        │                │                        │
├───────────────────────────┼────────────────────────┼────────────────┼────────────────────────┤
│ flatten (Flatten)         │ (None, 2048)           │              0 │ avg_pool[0][0]         │
├───────────────────────────┼────────────────────────┼────────────────┼────────────────────────┤
│ fc1000 (Dense)            │ (None, 1000)           │      2,049,000 │ flatten[0][0]          │
└───────────────────────────┴────────────────────────┴────────────────┴────────────────────────┘
 Total params: 25,636,712 (97.80 MB)
 Trainable params: 25,583,592 (97.59 MB)
 Non-trainable params: 53,120 (207.50 KB)
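Several numbers in the summary can be checked by hand. The sketch below reproduces the first output shapes and a few parameter counts using the standard formulas for unpadded convolution/pooling and for layer parameters. The helper names `conv_out` and `conv_params` are made up for this illustration, and the size estimate assumes 4-byte float32 parameters:

```python
def conv_out(size, kernel, stride):
    # Output length of a convolution or pooling window without padding ('valid')
    return (size - kernel) // stride + 1

def conv_params(kernel_h, kernel_w, in_ch, out_ch):
    # One weight per kernel element and channel pair, plus one bias per filter
    return kernel_h * kernel_w * in_ch * out_ch + out_ch

padded = 224 + 2 * 3                      # ZeroPadding2D((3, 3)) -> 230
after_conv1 = conv_out(padded, 7, 2)      # 112
after_pool = conv_out(after_conv1, 3, 2)  # 55

conv1_params = conv_params(7, 7, 3, 64)   # 9,472
bn_params = 4 * 64                        # gamma, beta, moving mean, moving variance -> 256
fc_params = 2048 * 1000 + 1000            # 2,049,000

total_params = 25_636_712
size_mb = total_params * 4 / 1024 ** 2    # assumed float32: 4 bytes per parameter

print(padded, after_conv1, after_pool)
print(conv1_params, bn_params, fc_params)
print(f'{size_mb:.2f} MB')                # 97.80 MB
```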

IV. Compile

opt = tf.keras.optimizers.Adam(learning_rate=1e-4)

model.compile(optimizer=opt,
              loss='sparse_categorical_crossentropy',
              metrics=['accuracy'])

V. Train the Model

epochs = 10

history = model.fit(
    train_ds,
    validation_data=val_ds,
    epochs=epochs
)

Epoch 1/10

57/57 ━━━━━━━━━━━━━━━━━━━━ 269s 1s/step - accuracy: 0.5021 - loss: 3.6748 - val_accuracy: 0.9646 - val_loss: 0.1640

Epoch 2/10

57/57 ━━━━━━━━━━━━━━━━━━━━ 6s 99ms/step - accuracy: 0.9636 - loss: 0.2068 - val_accuracy: 0.9823 - val_loss: 0.0241

Epoch 3/10

57/57 ━━━━━━━━━━━━━━━━━━━━ 6s 97ms/step - accuracy: 0.9800 - loss: 0.0443 - val_accuracy: 0.9912 - val_loss: 0.0115

Epoch 4/10

57/57 ━━━━━━━━━━━━━━━━━━━━ 10s 98ms/step - accuracy: 0.9943 - loss: 0.0286 - val_accuracy: 0.9912 - val_loss: 0.0183

Epoch 5/10

57/57 ━━━━━━━━━━━━━━━━━━━━ 11s 104ms/step - accuracy: 0.9945 - loss: 0.0377 - val_accuracy: 1.0000 - val_loss: 0.0108

Epoch 6/10

57/57 ━━━━━━━━━━━━━━━━━━━━ 10s 100ms/step - accuracy: 0.9995 - loss: 0.0038 - val_accuracy: 0.9735 - val_loss: 0.0359

Epoch 7/10

57/57 ━━━━━━━━━━━━━━━━━━━━ 10s 104ms/step - accuracy: 1.0000 - loss: 0.0024 - val_accuracy: 0.9912 - val_loss: 0.0196

Epoch 8/10

57/57 ━━━━━━━━━━━━━━━━━━━━ 10s 98ms/step - accuracy: 1.0000 - loss: 6.2409e-04 - val_accuracy: 0.9912 - val_loss: 0.0139

Epoch 9/10

57/57 ━━━━━━━━━━━━━━━━━━━━ 6s 106ms/step - accuracy: 1.0000 - loss: 5.9430e-04 - val_accuracy: 1.0000 - val_loss: 0.0103

Epoch 10/10

57/57 ━━━━━━━━━━━━━━━━━━━━ 6s 99ms/step - accuracy: 1.0000 - loss: 3.5871e-04 - val_accuracy: 1.0000 - val_loss: 0.0094
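The validation accuracies in this log move in visible steps because the validation set is small. As a sketch, assuming the validation subset holds 113 images (`int(0.2 * 565)`, an assumption about how the split is computed), every logged value maps back to a whole number of correctly classified images:

```python
val_count = 113  # assumed size of the validation subset: int(0.2 * 565)

# Validation accuracies reported in the log above.
logged = ['0.9646', '0.9823', '0.9912', '1.0000', '0.9735']

# Every reachable accuracy is (number of correct images) / val_count.
reachable = {f'{k / val_count:.4f}': k for k in range(val_count + 1)}

for value in logged:
    correct = reachable[value]
    print(value, '->', correct, 'correct,', val_count - correct, 'wrong')
```

Read this way, the dip to 0.9735 in epoch 6 corresponds to three misclassified images rather than a large change in the model.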

VI. Evaluate the Model

acc = history.history['accuracy']
val_acc = history.history['val_accuracy']

loss = history.history['loss']
val_loss = history.history['val_loss']

epochs_range = range(epochs)

plt.figure(figsize=(12, 4))

# Left panel: accuracy curves
plt.subplot(1, 2, 1)
plt.plot(epochs_range, acc, label='Training Accuracy')
plt.plot(epochs_range, val_acc, label='Validation Accuracy')
plt.legend(loc='lower right')
plt.title('Training and Validation Accuracy')

# Right panel: loss curves
plt.subplot(1, 2, 2)
plt.plot(epochs_range, loss, label='Training Loss')
plt.plot(epochs_range, val_loss, label='Validation Loss')
plt.legend(loc='upper right')
plt.title('Training and Validation Loss')

plt.show()

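VII. Prediction

plt.figure(figsize=(10, 4))

for images, labels in train_ds.take(1):
    for i in range(8):
        ax = plt.subplot(2, 4, i + 1)
        plt.imshow(images[i].numpy().astype('uint8'))

        # Add a batch dimension so the model receives shape (1, 224, 224, 3)
        img_array = tf.expand_dims(images[i], 0)
        predictions = model.predict(img_array)

        # Use the class with the highest predicted score as the title
        plt.title(class_names[np.argmax(predictions)])
        plt.axis('off')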
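One detail worth keeping in mind: the model keeps the 1000-way `fc1000` output, while `class_names` holds only four labels, so `class_names[np.argmax(predictions)]` would raise an IndexError if the largest score ever fell outside the first four indices. A defensive sketch in plain Python follows; the `decode` helper and the score vectors are hypothetical and not part of the original notebook:

```python
class_names = ['Bananaquit', 'Black Skimmer', 'Black Throated Bushtiti', 'Cockatoo']

def decode(probabilities, names):
    # Index of the largest score (what np.argmax returns for a single row)
    best = max(range(len(probabilities)), key=probabilities.__getitem__)
    # The network has 1000 outputs, but only len(names) of them have a label
    if best >= len(names):
        return f'unknown (index {best})'
    return names[best]

# Made-up score vectors standing in for rows of model.predict output
scores_ok = [0.01, 0.02, 0.95, 0.02] + [0.0] * 996
scores_bad = [0.0] * 999 + [1.0]

print(decode(scores_ok, class_names))   # Black Throated Bushtiti
print(decode(scores_bad, class_names))  # unknown (index 999)
```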

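VIII. Summary

In an ordinary convolutional neural network, increasing the depth can lead to exploding or vanishing gradients. The residual structure of ResNet mitigates these problems effectively: each block adds its input back onto its output, which gives gradients a direct path through the network.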
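A toy calculation makes the effect of the shortcut visible. The sketch below is a scalar stand-in, not the network itself: it assumes every layer's transform F has the same small derivative (`local_grad`, a value chosen purely for illustration) and compares the chain-rule product for a plain stack, y = F(x), with a residual stack, y = x + F(x):

```python
depth = 50
local_grad = 0.01  # assumed derivative of each layer's transform F, small on purpose

# Plain stack: y = F(x) at every layer, so the chain rule multiplies F'(x) repeatedly.
plain_grad = 1.0
# Residual stack: y = x + F(x), so each factor becomes 1 + F'(x).
residual_grad = 1.0

for _ in range(depth):
    plain_grad *= local_grad
    residual_grad *= 1.0 + local_grad

print(f'plain:    {plain_grad:.3e}')
print(f'residual: {residual_grad:.3f}')
```

In this toy setting the plain gradient shrinks to a vanishingly small number, while the residual gradient stays on the order of 1, because the identity term keeps every factor close to one.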