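1 Introduction

This article documents how to use the D-LKA module to improve the YOLOv10 object detection model. D-LKA combines the wide receptive field of large convolution kernels with the flexibility of deformable convolution, which lets it handle complex image content effectively. Here it is applied to YOLOv10 with two further modifications, so that the network can combine information across several dimensions and emphasize important features. The goal is better feature extraction for objects of different scales and irregular shapes.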


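Table of contents

- 1 Introduction

- 2 Introduction to D-LKA

- 2.1 Design motivation

- 2.2 Principles

- 2.2.1 Large Kernel Attention (LKA)

- 2.2.2 Deformable Large Kernel Attention (D-LKA)

- 2.3 Structure

- 2.3.1 2D D-LKA block

- 2.3.2 3D D-LKA block

- 2.3.3 Networks built on the D-LKA block

- 2.4 Advantages

- 3 D-LKA implementation code

- 4 Innovative modules

- 4.1 Improvement 1⭐

- 4.2 Improvement 2⭐

- 5 Integration steps

- 5.1 Change 1

- 5.2 Change 2

- 5.3 Change 3

- 6 YAML model files

- 6.1 Improved model, version 1

- 6.2 Improved model, version 2⭐

- 7 Successful run results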

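2 Introduction to D-LKA

2.1 Design motivation

- Addressing the limitations of conventional convolution and attention

- Conventional convolutional neural networks (CNNs) struggle with objects of different scales in image segmentation. If an object extends beyond the receptive field of the corresponding layer, it is under-segmented. If the receptive field is much larger than the object, background information can unduly influence the prediction.

- Vision Transformer (ViT) can aggregate global information through attention, but it is limited in modeling local information and has difficulty detecting local textures.

- Making full use of volumetric context while staying computationally efficient

- Most current methods process 3D volumetric images slice by slice (pseudo-3D), which loses key inter-slice information and lowers overall performance.

- What is needed is a method that fully captures volumetric context without excessive computational cost, while also accounting for the fact that lesions in medical images are often deformed.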

2.2 Principles

2.2.1 Large Kernel Attention (LKA)

- Building a comparable receptive field: a large convolution kernel can be decomposed into a depth-wise convolution, a depth-wise dilated convolution, and a 1×1 convolution. The combination provides a receptive field similar to that of self-attention, with fewer parameters and less computation.

- Parameter and FLOP counts

- For a 2D input of size $H \times W$ with $C$ channels, the depth-wise kernel size is $DW = (2d-1) \times (2d-1)$ and the depth-wise dilated kernel size is $DW\text{-}D = \left\lceil\frac{K}{d}\right\rceil \times \left\lceil\frac{K}{d}\right\rceil$, where $K$ is the target kernel size and $d$ is the dilation rate. The parameter count is $P(K, d) = C\left(\left\lceil\frac{K}{d}\right\rceil^{2} + (2d-1)^{2} + 3 + C\right)$ and the number of floating-point operations (FLOPs) is $F(K, d) = P(K, d) \times H \times W$.

- For a 3D input of size $H \times W \times D$ with $C$ channels, the parameter count is $P_{3d}(K, d) = C\left(\left\lceil\frac{K}{d}\right\rceil^{3} + (2d-1)^{3} + 3 + C\right)$ and the FLOPs are $F_{3d}(K, d) = P_{3d}(K, d) \times H \times W \times D$.
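To make the formulas above concrete, here is a minimal sketch that evaluates them directly. The sample values ($K=21$, $d=3$, $C=64$, a $56 \times 56$ feature map) and the function names are illustrative assumptions, not settings taken from the paper or from this article.

```python
import math


def lka_params(K: int, d: int, C: int, dims: int = 2) -> int:
    """P(K, d) = C * (ceil(K/d)^n + (2d-1)^n + 3 + C), with n = 2 (2D) or 3 (3D)."""
    dilated_kernel = math.ceil(K / d) ** dims   # depth-wise dilated convolution
    plain_kernel = (2 * d - 1) ** dims          # depth-wise convolution
    return C * (dilated_kernel + plain_kernel + 3 + C)


def lka_flops(K: int, d: int, C: int, spatial: tuple) -> int:
    """F = P * H * W for 2D inputs, P * H * W * D for 3D inputs."""
    return lka_params(K, d, C, dims=len(spatial)) * math.prod(spatial)


def dense_params(K: int, C: int, dims: int = 2) -> int:
    """Weights of one ordinary K x K (x K) convolution with C input and C output channels."""
    return C * C * K ** dims


if __name__ == "__main__":
    K, d, C = 21, 3, 64  # assumed example values
    print("2D LKA params:  ", lka_params(K, d, C))
    print("3D LKA params:  ", lka_params(K, d, C, dims=3))
    print("2D LKA FLOPs:   ", lka_flops(K, d, C, (56, 56)))
    print("dense 2D params:", dense_params(K, C))
```

With these sample values the decomposed kernel needs far fewer parameters than a single dense 21×21 convolution, which is the saving the formulas describe.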

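2.2.2 Deformable Large Kernel Attention (D-LKA)

- Introducing deformable convolution: D-LKA adds deformable convolutions to LKA. A deformable convolution shifts each point of its sampling grid by a learned offset, so the grid can deform freely.

- Forming an adaptive kernel: an additional convolution layer learns the deformation from the feature map and produces an offset field. Because the deformation is learned from the features themselves, the result is an adaptive kernel. Its flexible shape represents deformed objects better and sharpens object boundaries.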
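The sampling idea can be illustrated without any deep learning library. The sketch below computes a single output value of a 3×3 deformable convolution in pure Python. Each kernel tap is shifted by its own offset, and fractional positions are read with bilinear interpolation, which is how `torchvision.ops.DeformConv2d` samples. The function names, the toy image, and the hand-written offsets are assumptions for illustration only. In D-LKA the offsets come from a convolution layer.

```python
import math


def bilinear(img, y, x):
    """Read a 2D list at a fractional (y, x); positions outside the image count as 0."""
    h, w = len(img), len(img[0])
    y0, x0 = math.floor(y), math.floor(x)
    value = 0.0
    for yy in (y0, y0 + 1):
        for xx in (x0, x0 + 1):
            if 0 <= yy < h and 0 <= xx < w:
                weight = (1 - abs(y - yy)) * (1 - abs(x - xx))
                value += weight * img[yy][xx]
    return value


def deform_conv_at(img, weights, offsets, cy, cx):
    """One output value of a 3x3 deformable convolution centered at (cy, cx).

    weights[k] is the kernel weight of tap k and offsets[k] = (dy, dx) is the
    shift applied to that tap's sampling position.
    """
    out = 0.0
    k = 0
    for ky in (-1, 0, 1):
        for kx in (-1, 0, 1):
            dy, dx = offsets[k]
            out += weights[k] * bilinear(img, cy + ky + dy, cx + kx + dx)
            k += 1
    return out


if __name__ == "__main__":
    img = [[y * 4 + x for x in range(4)] for y in range(4)]  # toy 4x4 ramp image
    ones = [1.0] * 9
    regular = deform_conv_at(img, ones, [(0.0, 0.0)] * 9, 1, 1)  # zero offsets
    shifted = deform_conv_at(img, ones, [(0.0, 0.5)] * 9, 1, 1)  # half a pixel right
    print(regular, shifted)
```

With all offsets set to zero the function reduces to an ordinary 3×3 convolution. Non-zero offsets move the sampling points, which is what lets the kernel follow deformed object shapes.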

2.3 Structure

2.3.1 2D D-LKA block

- Overall structure: the block consists of LayerNorm, deformable LKA, and a multi-layer perceptron (MLP), with residual connections to ensure effective feature propagation.

- Formulas

- $x_{1} = \text{D-LKA-Attn}\left(\mathrm{LN}\left(x_{in}\right)\right) + x_{in}$

- $x_{out} = \mathrm{MLP}\left(\mathrm{LN}\left(x_{1}\right)\right) + x_{1}$

- $\mathrm{MLP} = \mathrm{Conv}_{1}\left(\mathrm{GeLU}\left(\mathrm{Conv}_{d}\left(\mathrm{Conv}_{1}(x)\right)\right)\right)$

Here $x_{in}$ is the input feature, $\mathrm{LN}$ is layer normalization, $\text{D-LKA-Attn}$ is deformable large kernel attention, $\mathrm{Conv}_{d}$ is a depth-wise convolution, $\mathrm{Conv}_{1}$ is a linear layer, and $\mathrm{GeLU}$ is the activation function.

2.3.2 3D D-LKA block

- Overall structure: the block consists of layer normalization and D-LKA attention, followed by a 3×3×3 convolution layer and a 1×1×1 convolution layer with a residual connection.

- Formulas

- $x_{1} = \mathrm{DAttn}\left(\mathrm{LN}\left(x_{in}\right)\right) + x_{in}$

- $x_{out} = \mathrm{Conv}_{1}\left(\mathrm{Conv}_{3}\left(x_{1}\right)\right) + x_{1}$

Here $x_{in}$ is the input feature, $\mathrm{LN}$ is layer normalization, $\mathrm{DAttn}$ is deformable large kernel attention, $\mathrm{Conv}_{1}$ is a linear layer, $\mathrm{Conv}_{3}$ is a feed-forward network made of two convolution layers and an activation function, and $x_{out}$ is the output feature.

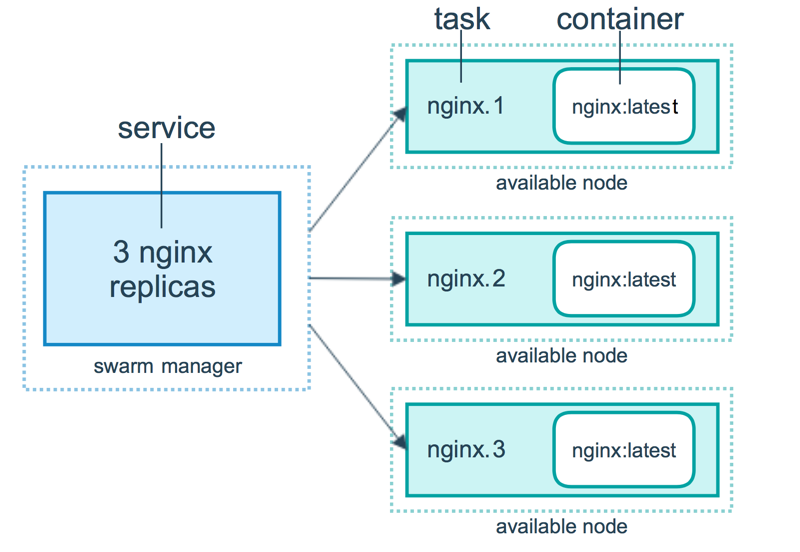

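2.3.3 Networks built on the D-LKA block

- 2D D-LKA Net

- Encoder: MaxViT serves as the encoder for efficient feature extraction. A convolutional stem first reduces the input to $\frac{H}{4} \times \frac{W}{4} \times C$. Four stages of MaxViT blocks then extract features, and each stage is followed by a downsampling layer.

- Decoder: four stages of D-LKA layers, each with two D-LKA blocks, followed by a patch-expanding layer that upsamples the resolution and reduces the channel dimension. A final linear layer produces the output.

- 3D D-LKA Net

- Encoder-decoder design: a patch embedding layer reduces the input from $(H \times W \times D)$ to $(\frac{H}{4} \times \frac{W}{4} \times \frac{D}{2})$. The encoder has three D-LKA stages of three D-LKA blocks each, with downsampling after every stage. The central bottleneck contains two groups of D-LKA blocks.

- The decoder mirrors the encoder. It uses transposed convolutions to double the feature resolution and reduce the channel count, and each decoder stage uses three D-LKA blocks to capture long-range feature dependencies. The segmentation output is produced by a 3×3×3 and a 1×1×1 convolution layer, and the skip connections are formed through convolutions.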

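2.4 Advantages

- Handles both context and local descriptors: the D-LKA module balances contextual information processing with the preservation of local descriptors, which enables precise semantic segmentation.

- Adapts the receptive field dynamically: the receptive field is adjusted based on the data, which overcomes the fixed filter mask of conventional convolution.

- Works for 2D and 3D data: the D-LKA Net architecture comes in 2D and 3D versions. The D-LKA mechanism of the 3D model is suited to 3D context and can exchange information seamlessly across different volumes.

- Computationally efficient: the architecture relies on the D-LKA concept alone, and the authors report state-of-the-art results on several segmentation benchmarks. Deformable LKA does add parameters and FLOPs to the model, but thanks to its efficient implementation the inference time can even decrease when processing batches.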

Paper: https://arxiv.org/pdf/2309.00121.pdf

Source code: https://github.com/mindflow-institue/deformableLKA

3 D-LKA implementation code

The code for D-LKA and its improved variants is as follows:

```python
import torch
import torch.nn as nn
import torchvision
from ultralytics.utils.torch_utils import fuse_conv_and_bn


class DeformConv(nn.Module):
    """Deformable convolution whose offsets are predicted from the input itself."""

    def __init__(self, in_channels, groups, kernel_size=(3, 3), padding=1, stride=1, dilation=1, bias=True):
        super().__init__()
        # Predicts one (dy, dx) pair per kernel tap at every output position.
        self.offset_net = nn.Conv2d(
            in_channels=in_channels,
            out_channels=2 * kernel_size[0] * kernel_size[1],
            kernel_size=kernel_size,
            padding=padding,
            stride=stride,
            dilation=dilation,
            bias=True,
        )
        self.deform_conv = torchvision.ops.DeformConv2d(
            in_channels=in_channels,
            out_channels=in_channels,
            kernel_size=kernel_size,
            padding=padding,
            groups=groups,
            stride=stride,
            dilation=dilation,
            bias=False,
        )

    def forward(self, x):
        offsets = self.offset_net(x)
        out = self.deform_conv(x, offsets)
        return out


class deformable_LKA(nn.Module):
    """Large kernel attention built from two depth-wise deformable convolutions and a 1x1 convolution."""

    def __init__(self, dim):
        super().__init__()
        self.conv0 = DeformConv(dim, kernel_size=(5, 5), padding=2, groups=dim)
        self.conv_spatial = DeformConv(dim, kernel_size=(7, 7), stride=1, padding=9, groups=dim, dilation=3)
        self.conv1 = nn.Conv2d(dim, dim, 1)

    def forward(self, x):
        u = x.clone()
        attn = self.conv0(x)
        attn = self.conv_spatial(attn)
        attn = self.conv1(attn)
        return u * attn  # the attention map gates the input element-wise


class deformable_LKA_Attention(nn.Module):
    """1x1 projection -> GELU -> deformable LKA -> 1x1 projection, with a residual connection."""

    def __init__(self, d_model):
        super().__init__()
        self.proj_1 = nn.Conv2d(d_model, d_model, 1)
        self.activation = nn.GELU()
        self.spatial_gating_unit = deformable_LKA(d_model)
        self.proj_2 = nn.Conv2d(d_model, d_model, 1)

    def forward(self, x):
        shortcut = x.clone()
        x = self.proj_1(x)
        x = self.activation(x)
        x = self.spatial_gating_unit(x)
        x = self.proj_2(x)
        x = x + shortcut
        return x


def autopad(k, p=None, d=1):  # kernel, padding, dilation
    """Pad to 'same' shape outputs."""
    if d > 1:
        k = d * (k - 1) + 1 if isinstance(k, int) else [d * (x - 1) + 1 for x in k]  # actual kernel-size
    if p is None:
        p = k // 2 if isinstance(k, int) else [x // 2 for x in k]  # auto-pad
    return p


class Conv(nn.Module):
    """Standard convolution with args(ch_in, ch_out, kernel, stride, padding, groups, dilation, activation)."""

    default_act = nn.SiLU()  # default activation

    def __init__(self, c1, c2, k=1, s=1, p=None, g=1, d=1, act=True):
        """Initialize Conv layer with given arguments including activation."""
        super().__init__()
        self.conv = nn.Conv2d(c1, c2, k, s, autopad(k, p, d), groups=g, dilation=d, bias=False)
        self.bn = nn.BatchNorm2d(c2)
        self.act = self.default_act if act is True else act if isinstance(act, nn.Module) else nn.Identity()

    def forward(self, x):
        """Apply convolution, batch normalization and activation to input tensor."""
        return self.act(self.bn(self.conv(x)))

    def forward_fuse(self, x):
        """Apply convolution and activation only (used once BatchNorm has been fused into the conv)."""
        return self.act(self.conv(x))


class PSA_DLKA(nn.Module):
    """PSA block whose attention branch is replaced by deformable LKA attention."""

    def __init__(self, c1, c2, e=0.5):
        super().__init__()
        assert c1 == c2
        self.c = int(c1 * e)
        self.cv1 = Conv(c1, 2 * self.c, 1, 1)
        self.cv2 = Conv(2 * self.c, c1, 1)

        self.attn = deformable_LKA_Attention(self.c)
        self.ffn = nn.Sequential(Conv(self.c, self.c * 2, 1), Conv(self.c * 2, self.c, 1, act=False))

    def forward(self, x):
        a, b = self.cv1(x).split((self.c, self.c), dim=1)  # only branch b goes through attention
        b = b + self.attn(b)
        b = b + self.ffn(b)
        return self.cv2(torch.cat((a, b), 1))


class Bottleneck(nn.Module):
    """Standard bottleneck."""

    def __init__(self, c1, c2, shortcut=True, g=1, k=(3, 3), e=0.5):
        """Initializes a standard bottleneck module with optional shortcut connection and configurable parameters."""
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, k[0], 1)
        self.cv2 = Conv(c_, c2, k[1], 1, g=g)
        self.add = shortcut and c1 == c2

    def forward(self, x):
        """Apply the two convolutions, adding the input back when the shortcut is enabled."""
        return x + self.cv2(self.cv1(x)) if self.add else self.cv2(self.cv1(x))


class C2f(nn.Module):
    """Faster Implementation of CSP Bottleneck with 2 convolutions."""

    def __init__(self, c1, c2, n=1, shortcut=False, g=1, e=0.5):
        """Initializes a CSP bottleneck with 2 convolutions and n Bottleneck blocks for faster processing."""
        super().__init__()
        self.c = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, 2 * self.c, 1, 1)
        self.cv2 = Conv((2 + n) * self.c, c2, 1)  # optional act=FReLU(c2)
        self.m = nn.ModuleList(Bottleneck(self.c, self.c, shortcut, g, k=((3, 3), (3, 3)), e=1.0) for _ in range(n))

    def forward(self, x):
        """Forward pass through C2f layer."""
        y = list(self.cv1(x).chunk(2, 1))
        y.extend(m(y[-1]) for m in self.m)
        return self.cv2(torch.cat(y, 1))

    def forward_split(self, x):
        """Forward pass using split() instead of chunk()."""
        y = list(self.cv1(x).split((self.c, self.c), 1))
        y.extend(m(y[-1]) for m in self.m)
        return self.cv2(torch.cat(y, 1))


class RepVGGDW(torch.nn.Module):
    """Depth-wise 7x7 and 3x3 branches that can be fused into a single 7x7 convolution."""

    def __init__(self, ed) -> None:
        super().__init__()
        self.conv = Conv(ed, ed, 7, 1, 3, g=ed, act=False)
        self.conv1 = Conv(ed, ed, 3, 1, 1, g=ed, act=False)
        self.dim = ed
        self.act = nn.SiLU()

    def forward(self, x):
        return self.act(self.conv(x) + self.conv1(x))

    def forward_fuse(self, x):
        return self.act(self.conv(x))

    @torch.no_grad()
    def fuse(self):
        conv = fuse_conv_and_bn(self.conv.conv, self.conv.bn)
        conv1 = fuse_conv_and_bn(self.conv1.conv, self.conv1.bn)

        conv_w = conv.weight
        conv_b = conv.bias
        conv1_w = conv1.weight
        conv1_b = conv1.bias

        conv1_w = torch.nn.functional.pad(conv1_w, [2, 2, 2, 2])  # zero-pad the 3x3 kernel to 7x7

        final_conv_w = conv_w + conv1_w
        final_conv_b = conv_b + conv1_b

        conv.weight.data.copy_(final_conv_w)
        conv.bias.data.copy_(final_conv_b)

        self.conv = conv
        del self.conv1


class CIB(nn.Module):
    """Compact inverted block with deformable LKA attention appended."""

    def __init__(self, c1, c2, shortcut=True, e=0.5, lk=False):
        """Initializes the block with given input/output channels, shortcut option, expansion, and large-kernel flag."""
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = nn.Sequential(
            Conv(c1, c1, 3, g=c1),
            Conv(c1, 2 * c_, 1),
            Conv(2 * c_, 2 * c_, 3, g=2 * c_) if not lk else RepVGGDW(2 * c_),
            Conv(2 * c_, c2, 1),
            Conv(c2, c2, 3, g=c2),
            deformable_LKA_Attention(c2),  # runs on the c2-channel output (equal to c_ when e=1.0)
        )

        self.add = shortcut and c1 == c2

    def forward(self, x):
        """Apply the block, adding the input back when the shortcut is enabled."""
        return x + self.cv1(x) if self.add else self.cv1(x)


class C2fCIB_DLKA(C2f):
    """C2f variant whose inner blocks are CIB blocks with deformable LKA attention."""

    def __init__(self, c1, c2, n=1, shortcut=False, lk=False, g=1, e=0.5):
        """Initialize with arguments ch_in, ch_out, number, shortcut, large-kernel flag, groups, expansion."""
        super().__init__(c1, c2, n, shortcut, g, e)
        self.m = nn.ModuleList(CIB(self.c, self.c, shortcut, e=1.0, lk=lk) for _ in range(n))
```

4 Innovative modules

4.1 Improvement 1⭐

Approach: a PSA module built on D-LKA (Section 5 covers how to add it).

The first improvement modifies the PSA module of YOLOv10 by adding D-LKA to it.

The modified code is as follows.

The PSA module is extended with the D-LKA module and renamed PSA_DLKA:

```python
class PSA_DLKA(nn.Module):
    """PSA block whose attention branch is replaced by deformable LKA attention."""

    def __init__(self, c1, c2, e=0.5):
        super().__init__()
        assert c1 == c2
        self.c = int(c1 * e)
        self.cv1 = Conv(c1, 2 * self.c, 1, 1)
        self.cv2 = Conv(2 * self.c, c1, 1)

        self.attn = deformable_LKA_Attention(self.c)
        self.ffn = nn.Sequential(Conv(self.c, self.c * 2, 1), Conv(self.c * 2, self.c, 1, act=False))

    def forward(self, x):
        a, b = self.cv1(x).split((self.c, self.c), dim=1)  # only branch b goes through attention
        b = b + self.attn(b)
        b = b + self.ffn(b)
        return self.cv2(torch.cat((a, b), 1))
```
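The residual additions in PSA_DLKA only work if the attention branch leaves the spatial size unchanged. A quick check with the standard convolution output-size formula, using the kernel, padding, and dilation values of the two deformable convolutions in `deformable_LKA`, can be sketched as follows. The helper names and the 20×20 feature map are illustrative assumptions.

```python
def conv_out_size(size, kernel, padding, stride=1, dilation=1):
    """Output length along one axis, following the formula documented for nn.Conv2d."""
    return (size + 2 * padding - dilation * (kernel - 1) - 1) // stride + 1


def offset_channels(kernel):
    """Channels the offset network must emit: one (dy, dx) pair per kernel tap."""
    return 2 * kernel * kernel


if __name__ == "__main__":
    h = 20  # assumed feature-map height (e.g. a 640-pixel input at stride 32)
    after_conv0 = conv_out_size(h, kernel=5, padding=2)                          # conv0
    after_spatial = conv_out_size(after_conv0, kernel=7, padding=9, dilation=3)  # conv_spatial
    print(h, after_conv0, after_spatial)
    print(offset_channels(5), offset_channels(7))
```

Both layers preserve the input size, so `u * attn` and the later `b + self.attn(b)` operate on tensors of matching shape. The second print shows how many offset channels each `offset_net` produces, which is where most of the module's parameters come from.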

4.2 Improvement 2⭐

Approach: a C2fCIB module built on D-LKA (Section 5 covers how to add it).

The second improvement modifies the C2fCIB module of YOLOv10 by adding D-LKA to it.

The modified code is as follows.

First, the CIB module is extended with the D-LKA module:

```python
class CIB(nn.Module):
    """Compact inverted block with deformable LKA attention appended."""

    def __init__(self, c1, c2, shortcut=True, e=0.5, lk=False):
        """Initializes the block with given input/output channels, shortcut option, expansion, and large-kernel flag."""
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = nn.Sequential(
            Conv(c1, c1, 3, g=c1),
            Conv(c1, 2 * c_, 1),
            Conv(2 * c_, 2 * c_, 3, g=2 * c_) if not lk else RepVGGDW(2 * c_),
            Conv(2 * c_, c2, 1),
            Conv(c2, c2, 3, g=c2),
            deformable_LKA_Attention(c2),  # runs on the c2-channel output (equal to c_ when e=1.0)
        )

        self.add = shortcut and c1 == c2

    def forward(self, x):
        """Apply the block, adding the input back when the shortcut is enabled."""
        return x + self.cv1(x) if self.add else self.cv1(x)
```

Then C2fCIB is renamed C2fCIB_DLKA:

```python
class C2fCIB_DLKA(C2f):
    """C2f variant whose inner blocks are CIB blocks with deformable LKA attention."""

    def __init__(self, c1, c2, n=1, shortcut=False, lk=False, g=1, e=0.5):
        """Initialize with arguments ch_in, ch_out, number, shortcut, large-kernel flag, groups, expansion."""
        super().__init__(c1, c2, n, shortcut, g, e)
        self.m = nn.ModuleList(CIB(self.c, self.c, shortcut, e=1.0, lk=lk) for _ in range(n))
```

Note❗: the module names to declare in Section 5 are PSA_DLKA and C2fCIB_DLKA.

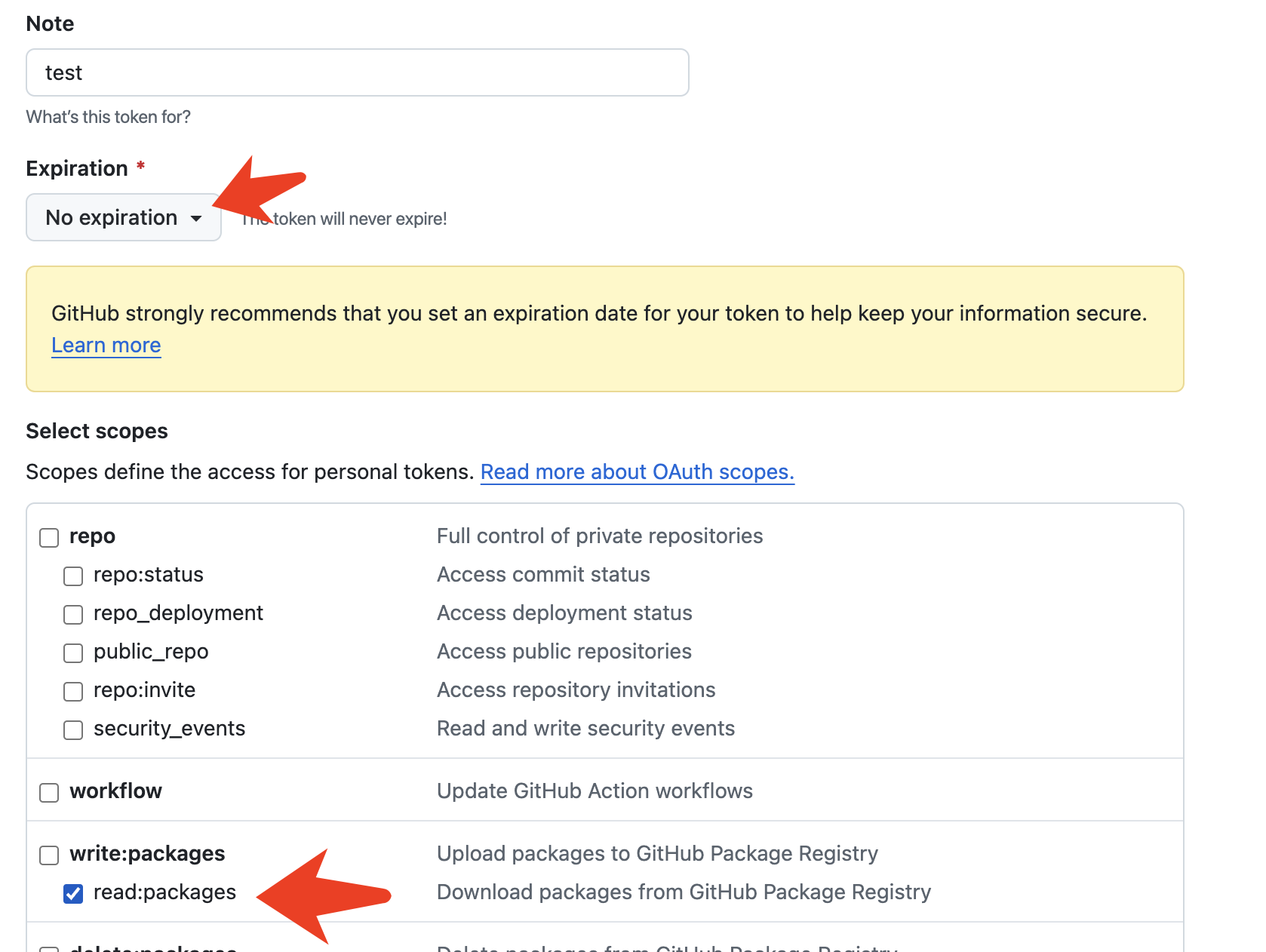

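5 Integration steps

5.1 Change 1

① Create an AddModules folder under ultralytics/nn/ to hold the module code.

② Create DLKA.py in the AddModules folder and paste the code from Section 3 into it.

5.2 Change 2

Create __init__.py in the AddModules folder (skip this if it already exists) and import the module in it: from .DLKA import *

5.3 Change 3

In ultralytics/nn/tasks.py, the module class names must be added in two places.

First, import the modules.

Second, register PSA_DLKA and C2fCIB_DLKA in the parse_model function.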
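What registration changes can be seen in a simplified stand-in for the argument handling. This is a sketch under assumptions, not the real `parse_model`: the function `resolve_args` and the set names `channel_aware` and `repeat_aware` are hypothetical. It only mimics two behaviours that, as far as I understand the Ultralytics code, depend on which module lists a class is added to: prepending input/output channels to the yaml args, and moving the repeat count into the args.

```python
def resolve_args(name, n, args, c_in, c_out, channel_aware, repeat_aware):
    """Rewrite a yaml entry's args the way a parse_model-like builder might.

    name          -- module name from the yaml entry
    n             -- repeat count after depth scaling
    args          -- args list from the yaml entry; args[0] is the nominal output width
    c_in, c_out   -- already-scaled input and output channel counts
    channel_aware -- hypothetical set of modules that receive (c_in, c_out, ...)
    repeat_aware  -- hypothetical set of modules that take n as their third argument
    """
    args = list(args)
    if name in channel_aware:
        args = [c_in, c_out, *args[1:]]
        if name in repeat_aware:
            args.insert(2, n)  # the module builds its n inner blocks itself
            n = 1              # so the builder no longer stacks n copies
    return n, args


if __name__ == "__main__":
    entry = ("C2fCIB_DLKA", 2, [1024, True, True])
    both = resolve_args(*entry, 576, 576,
                        channel_aware={"PSA_DLKA", "C2fCIB_DLKA"},
                        repeat_aware={"C2fCIB_DLKA"})
    channels_only = resolve_args(*entry, 576, 576,
                                 channel_aware={"PSA_DLKA", "C2fCIB_DLKA"},
                                 repeat_aware=set())
    print(both)
    print(channels_only)
```

In the second case the two `True` values bind to `n` and `shortcut` in the `C2fCIB_DLKA` signature rather than to `shortcut` and `lk`, and the builder stacks two separate copies of the module. It is worth checking the printed arguments of each custom layer in the run log against the intended ones.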

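6 YAML model files

6.1 Improved model, version 1

Taking ultralytics/cfg/models/v10/yolov10m.yaml as the example, create a model file named yolov10m-PSA_DLKA.yaml in the same directory for training on your own dataset.

Copy the contents of yolov10m.yaml into yolov10m-PSA_DLKA.yaml and set nc to the number of classes in your dataset.

📌 The change to the model is to replace PSA in the backbone with PSA_DLKA.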
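The channel widths and repeat counts written in a model yaml are nominal values that get rescaled by the selected `scales` entry when the model is built. The sketch below shows that arithmetic as I understand it from Ultralytics: widths are capped at `max_channels`, multiplied by the width factor and rounded up to a multiple of 8, and repeat counts greater than 1 are multiplied by the depth factor. The function names `scale_channels` and `scale_repeats` are hypothetical, and the scale values `[0.67, 0.75, 768]` are an assumption for the m scale. They reproduce the channel widths shown in the run log at the end of this article.

```python
import math


def make_divisible(x, divisor=8):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


def scale_channels(c, width, max_channels):
    """Scaled output width of a layer whose yaml entry asks for c channels."""
    return make_divisible(min(c, max_channels) * width, 8)


def scale_repeats(n, depth):
    """Scaled repeat count; a single repeat is never scaled."""
    return max(round(n * depth), 1) if n > 1 else n


if __name__ == "__main__":
    depth, width, max_channels = 0.67, 0.75, 768  # assumed m-scale constants
    for nominal in (64, 128, 256, 512, 1024):
        print(nominal, "->", scale_channels(nominal, width, max_channels))
    for repeats in (1, 3, 6):
        print(repeats, "->", scale_repeats(repeats, depth))
```

Under these assumptions a nominal width of 1024 becomes 576, which is why the custom PSA_DLKA layer is built with 576 channels even though the yaml entry says 1024.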

```yaml
# Parameters
nc: 1 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n'
  # [depth, width, max_channels]
  m: [0.67, 0.75, 768] # m scale, consistent with the 576-channel layers in the run log

backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 3, C2f, [128, True]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 6, C2f, [256, True]]
  - [-1, 1, SCDown, [512, 3, 2]] # 5-P4/16
  - [-1, 6, C2f, [512, True]]
  - [-1, 1, SCDown, [1024, 3, 2]] # 7-P5/32
  - [-1, 3, C2fCIB, [1024, True, True]]
  - [-1, 1, SPPF, [1024, 5]] # 9
  - [-1, 1, PSA_DLKA, [1024]] # 10

# YOLOv8.0n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 3, C2f, [512]] # 13

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 3, C2f, [256]] # 16 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 13], 1, Concat, [1]] # cat head P4
  - [-1, 3, C2f, [512]] # 19 (P4/16-medium)

  - [-1, 1, SCDown, [512, 3, 2]]
  - [[-1, 10], 1, Concat, [1]] # cat head P5
  - [-1, 3, C2fCIB, [1024, True, True]] # 22 (P5/32-large)

  - [[16, 19, 22], 1, v10Detect, [nc]] # Detect(P3, P4, P5)
```

6.2 Improved model, version 2⭐

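Taking ultralytics/cfg/models/v10/yolov10m.yaml as the example, create a model file named yolov10m-C2fCIB_DLKA.yaml in the same directory for training on your own dataset.

Copy the contents of yolov10m.yaml into yolov10m-C2fCIB_DLKA.yaml and set nc to the number of classes in your dataset.

📌 The change to the model is to replace the C2fCIB module in the backbone with the C2fCIB_DLKA module.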

```yaml
# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLOv10m with C2fCIB_DLKA in the backbone, matching the layer listing in Section 7

# Parameters
nc: 1 # number of classes
scales: # model compound scaling constants
  # [depth, width, max_channels]
  m: [0.67, 0.75, 768]

backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 3, C2f, [128, True]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 6, C2f, [256, True]]
  - [-1, 1, SCDown, [512, 3, 2]] # 5-P4/16
  - [-1, 6, C2f, [512, True]]
  - [-1, 1, SCDown, [1024, 3, 2]] # 7-P5/32
  - [-1, 3, C2fCIB_DLKA, [1024, True, True]]
  - [-1, 1, SPPF, [1024, 5]] # 9
  - [-1, 1, PSA, [1024]] # 10

head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 3, C2f, [512]] # 13

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 3, C2f, [256]] # 16 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 13], 1, Concat, [1]] # cat head P4
  - [-1, 3, C2f, [512]] # 19 (P4/16-medium)

  - [-1, 1, SCDown, [512, 3, 2]]
  - [[-1, 10], 1, Concat, [1]] # cat head P5
  - [-1, 3, C2fCIB, [1024, True, True]] # 22 (P5/32-large)

  - [[16, 19, 22], 1, v10Detect, [nc]] # Detect(P3, P4, P5)
```

7 Successful run results

Printing the network shows that PSA_DLKA and C2fCIB_DLKA have been added to the model, which is now ready for training.

YOLOv10m-PSA_DLKA:

```
YOLOv10m-PSA_DLKA summary: 507 layers, 19377722 parameters, 19377706 gradients, 68.7 GFLOPs

            from  n   params  module                                    arguments
  0           -1  1     1392  ultralytics.nn.modules.conv.Conv          [3, 48, 3, 2]
  1           -1  1    41664  ultralytics.nn.modules.conv.Conv          [48, 96, 3, 2]
  2           -1  2   111360  ultralytics.nn.modules.block.C2f          [96, 96, 2, True]
  3           -1  1   166272  ultralytics.nn.modules.conv.Conv          [96, 192, 3, 2]
  4           -1  4   813312  ultralytics.nn.modules.block.C2f          [192, 192, 4, True]
  5           -1  1    78720  ultralytics.nn.modules.block.SCDown       [192, 384, 3, 2]
  6           -1  4  3248640  ultralytics.nn.modules.block.C2f          [384, 384, 4, True]
  7           -1  1   228672  ultralytics.nn.modules.block.SCDown       [384, 576, 3, 2]
  8           -1  2  1748736  ultralytics.nn.modules.block.C2fCIB       [576, 576, 2, True, True]
  9           -1  1   831168  ultralytics.nn.modules.block.SPPF         [576, 576, 5]
 10           -1  1  3013492  ultralytics.nn.AddModules.DLKA.PSA_DLKA   [576, 576]
 11           -1  1        0  torch.nn.modules.upsampling.Upsample      [None, 2, 'nearest']
 12      [-1, 6]  1        0  ultralytics.nn.modules.conv.Concat        [1]
 13           -1  2  1993728  ultralytics.nn.modules.block.C2f          [960, 384, 2]
 14           -1  1        0  torch.nn.modules.upsampling.Upsample      [None, 2, 'nearest']
 15      [-1, 4]  1        0  ultralytics.nn.modules.conv.Concat        [1]
 16           -1  2   517632  ultralytics.nn.modules.block.C2f          [576, 192, 2]
 17           -1  1   332160  ultralytics.nn.modules.conv.Conv          [192, 192, 3, 2]
 18     [-1, 13]  1        0  ultralytics.nn.modules.conv.Concat        [1]
 19           -1  2  1846272  ultralytics.nn.modules.block.C2f          [576, 384, 2]
 20           -1  1   152448  ultralytics.nn.modules.block.SCDown       [384, 384, 3, 2]
 21     [-1, 10]  1        0  ultralytics.nn.modules.conv.Concat        [1]
 22           -1  2  1969920  ultralytics.nn.modules.block.C2fCIB       [960, 576, 2, True, True]
 23 [16, 19, 22]  1  2282134  ultralytics.nn.modules.head.v10Detect     [1, [192, 384, 576]]
```
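The 3013492 parameters reported for layer 10 can be reproduced by hand from the PSA_DLKA code in Section 3. The sketch below tallies them in plain Python, assuming the usual PyTorch conventions: a `Conv` wrapper is a bias-free convolution plus a BatchNorm2d with a weight and a bias per channel, and the plain `nn.Conv2d` layers carry a bias. All helper names are hypothetical.

```python
def conv_bn(c_in, c_out, k=1, groups=1):
    """Bias-free Conv2d followed by BatchNorm2d (weight + bias per channel)."""
    return (c_in // groups) * c_out * k * k + 2 * c_out


def conv_bias(c_in, c_out, k=1):
    """Plain Conv2d with bias."""
    return c_in * c_out * k * k + c_out


def dlka_attention_parts(dim):
    """Parameter groups of deformable_LKA_Attention(dim)."""
    offset_nets = sum(conv_bias(dim, 2 * k * k, k) for k in (5, 7))  # offset predictors
    deform_kernels = sum(dim * k * k for k in (5, 7))                # depth-wise, no bias
    pointwise = 3 * conv_bias(dim, dim)                              # proj_1, conv1, proj_2
    return {"offset_nets": offset_nets, "deform_kernels": deform_kernels, "pointwise": pointwise}


def psa_dlka_parts(c1, e=0.5):
    """Parameter groups of PSA_DLKA(c1, c1, e)."""
    c = int(c1 * e)
    parts = {
        "cv1": conv_bn(c1, 2 * c),
        "cv2": conv_bn(2 * c, c1),
        "ffn": conv_bn(c, 2 * c) + conv_bn(2 * c, c),
    }
    parts.update(dlka_attention_parts(c))
    return parts


if __name__ == "__main__":
    parts = psa_dlka_parts(576)
    total = sum(parts.values())
    for name, count in parts.items():
        print(f"{name:15s}{count:>10d}  {count / total:6.1%}")
    print(f"{'total':15s}{total:>10d}")
```

The tally matches the logged value. It also shows that the two offset-predicting convolutions account for more than half of the module's parameters, while the deformable depth-wise kernels themselves are tiny.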

**YOLOv10m-C2fCIB_DLKA**:

```
YOLOv10m-C2fCIB_DLKA summary: 527 layers, 22252686 parameters, 22252670 gradients, 71.0 GFLOPs

            from  n   params  module                                      arguments
  0           -1  1     1392  ultralytics.nn.modules.conv.Conv            [3, 48, 3, 2]
  1           -1  1    41664  ultralytics.nn.modules.conv.Conv            [48, 96, 3, 2]
  2           -1  2   111360  ultralytics.nn.modules.block.C2f            [96, 96, 2, True]
  3           -1  1   166272  ultralytics.nn.modules.conv.Conv            [96, 192, 3, 2]
  4           -1  4   813312  ultralytics.nn.modules.block.C2f            [192, 192, 4, True]
  5           -1  1    78720  ultralytics.nn.modules.block.SCDown         [192, 384, 3, 2]
  6           -1  4  3248640  ultralytics.nn.modules.block.C2f            [384, 384, 4, True]
  7           -1  1   228672  ultralytics.nn.modules.block.SCDown         [384, 576, 3, 2]
  8           -1  2  6384104  ultralytics.nn.AddModules.DLKA.C2fCIB_DLKA  [576, 576, True, True]
  9           -1  1   831168  ultralytics.nn.modules.block.SPPF           [576, 576, 5]
 10           -1  1  1253088  ultralytics.nn.modules.block.PSA            [576, 576]
 11           -1  1        0  torch.nn.modules.upsampling.Upsample        [None, 2, 'nearest']
 12      [-1, 6]  1        0  ultralytics.nn.modules.conv.Concat          [1]
 13           -1  2  1993728  ultralytics.nn.modules.block.C2f            [960, 384, 2]
 14           -1  1        0  torch.nn.modules.upsampling.Upsample        [None, 2, 'nearest']
 15      [-1, 4]  1        0  ultralytics.nn.modules.conv.Concat          [1]
 16           -1  2   517632  ultralytics.nn.modules.block.C2f            [576, 192, 2]
 17           -1  1   332160  ultralytics.nn.modules.conv.Conv            [192, 192, 3, 2]
 18     [-1, 13]  1        0  ultralytics.nn.modules.conv.Concat          [1]
 19           -1  2  1846272  ultralytics.nn.modules.block.C2f            [576, 384, 2]
 20           -1  1   152448  ultralytics.nn.modules.block.SCDown         [384, 384, 3, 2]
 21     [-1, 10]  1        0  ultralytics.nn.modules.conv.Concat          [1]
 22           -1  2  1969920  ultralytics.nn.modules.block.C2fCIB         [960, 576, 2, True, True]
 23 [16, 19, 22]  1  2282134  ultralytics.nn.modules.head.v10Detect       [1, [192, 384, 576]]
```
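The 6384104 parameters logged for layer 8 can be reproduced under one specific reading of the logged arguments `[576, 576, True, True]`: two stacked copies of C2fCIB_DLKA (the n column is 2), each built with a single CIB and with `lk=False`. This reading is an inference from the numbers, not something stated in the article. The helper names are hypothetical, and the counting conventions are the usual PyTorch ones (bias-free convolution plus BatchNorm2d inside `Conv`, biased `nn.Conv2d` elsewhere).

```python
def conv_bn(c_in, c_out, k=1, groups=1):
    """Bias-free Conv2d followed by BatchNorm2d (weight + bias per channel)."""
    return (c_in // groups) * c_out * k * k + 2 * c_out


def dlka_attention(dim):
    """Parameters of deformable_LKA_Attention(dim)."""
    offset_nets = sum(dim * (2 * k * k) * k * k + 2 * k * k for k in (5, 7))
    deform_kernels = sum(dim * k * k for k in (5, 7))
    pointwise = 3 * (dim * dim + dim)
    return offset_nets + deform_kernels + pointwise


def cib_dlka(c):
    """Parameters of CIB(c, c, e=1.0, lk=False) with the attention appended."""
    return (
        conv_bn(c, c, 3, groups=c)
        + conv_bn(c, 2 * c)
        + conv_bn(2 * c, 2 * c, 3, groups=2 * c)
        + conv_bn(2 * c, c)
        + conv_bn(c, c, 3, groups=c)
        + dlka_attention(c)
    )


def c2fcib_dlka(c1, c2, n=1, e=0.5):
    """Parameters of C2fCIB_DLKA(c1, c2, n, lk=False)."""
    c = int(c2 * e)
    return conv_bn(c1, 2 * c) + conv_bn((2 + n) * c, c2) + n * cib_dlka(c)


if __name__ == "__main__":
    one_copy = c2fcib_dlka(576, 576, n=1)
    print("one copy:  ", one_copy)
    print("two copies:", 2 * one_copy)
```

Two stacked copies give exactly the logged figure. If the intent was a single module with two inner CIB blocks and `lk=True`, the registration of C2fCIB_DLKA in `parse_model` may need to include the branch that inserts the repeat count into the arguments.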