Table of Contents

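- 1 Multi-class Classification Model

- 1.1 Creating the Data

- 1.2 Building the Model

- 1.3 What the Model Outputs

- 1.4 Loss Function and Optimizer

- 1.5 Training and Testing

- 1.6 Metrics for Measuring Model Performance

- 2 Exercises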

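We have already covered binary classification. Binary classification is like flipping a coin: heads or tails, only two possible outcomes.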

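Here we look at a multi-class classification model. For example, given an image as input, we classify it as pizza, steak, or sushi. There are three classes, which makes this a multi-class classification problem.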

1 Multi-class Classification Model

1.1 Creating the Data

# Create the data
from sklearn.datasets import make_blobs
import torch
from sklearn.model_selection import train_test_split
import matplotlib.pyplot as plt
import torch.nn as nn
import torch.optim as optim

RANDOM_SEED = 42

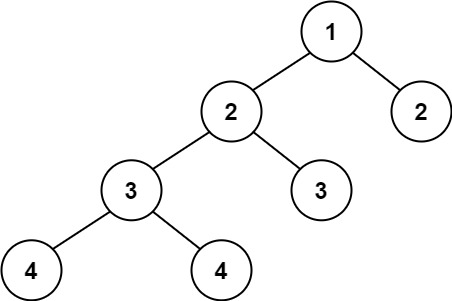

NUM_CLASSES = 4
NUM_FEATURES = 2

# Create multi-class data
X, y = make_blobs(n_samples=1000,
                  n_features=NUM_FEATURES,  # X features
                  centers=NUM_CLASSES,      # y labels
                  cluster_std=1.5,          # add some spread to the clusters
                  random_state=RANDOM_SEED)

# Convert the data to tensors
X = torch.from_numpy(X).type(torch.float)
y = torch.from_numpy(y).type(torch.LongTensor)
print(X.dtype, y.dtype)

torch.float32 torch.int64

# Split the dataset into training and test sets

X_train, X_test, y_train, y_test = train_test_split(X,y,test_size=0.2,random_state=RANDOM_SEED)

len(X_train), len(y_train), len(X_test), len(y_test)

print(X_train[:5], y_train[:5])

tensor([[ 5.0405, 3.3076],

[-2.6249, 9.5260],

[-8.5240, -9.0402],

[-6.0262, -4.4375],

[-3.3397, 7.2175]]) tensor([1, 0, 2, 2, 0])

# Visualize the data

plt.figure(figsize=(10, 7))

plt.scatter(X[:,0], X[:,1], c=y, cmap=plt.cm.RdYlBu)

# Set up device-agnostic code

device = "cuda" if torch.cuda.is_available() else "cpu"

device

'cuda'

1.2 Building the Model

# Build the model
# What's new this time: __init__() takes extra arguments
# input_features: number of inputs, output_features: number of outputs, hidden_units: number of neurons per hidden layer
class BlobModel(nn.Module):
    def __init__(self, input_features, output_features, hidden_units=8):
        super().__init__()
        self.linear_stack = nn.Sequential(
            nn.Linear(in_features=input_features, out_features=hidden_units),
            nn.ReLU(),
            nn.Linear(in_features=hidden_units, out_features=hidden_units),
            nn.ReLU(),
            nn.Linear(in_features=hidden_units, out_features=output_features)
        )

    def forward(self, x):
        return self.linear_stack(x)

model_0 = BlobModel(input_features=NUM_FEATURES, output_features=NUM_CLASSES, hidden_units=8).to(device)

model_0

BlobModel(

(linear_stack): Sequential(

(0): Linear(in_features=2, out_features=8, bias=True)

(1): ReLU()

(2): Linear(in_features=8, out_features=8, bias=True)

(3): ReLU()

(4): Linear(in_features=8, out_features=4, bias=True)

)

)

# Put the data on the same target device as the model
X_train, y_train = X_train.to(device), y_train.to(device)
X_test, y_test = X_test.to(device), y_test.to(device)

1.3 What the Model Outputs

# First, let's look at what the model outputs
model_0.eval()
with torch.inference_mode():
    y_logits = model_0(X_train)
print(y_logits[:5])
print(y_train[:5])

tensor([[-0.7333, -0.0491, -0.1253, 0.2468],

[-0.6059, 0.0958, 0.1232, 0.0641],

[ 1.1539, 0.1951, -0.1268, -1.1032],

[ 0.6424, 0.1891, -0.1171, -0.7310],

[-0.4519, 0.1914, 0.0589, 0.0031]], device='cuda:0')

tensor([1, 0, 2, 2, 0], device='cuda:0')

Clearly the model's outputs look nothing like our ground-truth labels, so the two can't be compared directly. We need to convert logits -> prediction probabilities -> prediction labels.

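Logits are the model's raw outputs. Prediction probabilities are the probabilities the model assigns to each class for a given sample; the class with the highest probability is the one the model considers most likely. Prediction labels are the class labels themselves; since we have four classes here, they are [0, 1, 2, 3].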

Remember how we went from logits -> prediction probabilities before? For binary classification we used sigmoid(); for multi-class classification we use softmax instead.
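Softmax turns a row of logits z into probabilities with softmax(z)_i = exp(z_i) / sum_j exp(z_j), so every value lands between 0 and 1 and each row sums to 1. As a sketch, here is a hand-written version (the name manual_softmax is just for illustration) checked against torch.softmax on the first row of logits printed above:

```python
import torch

def manual_softmax(logits: torch.Tensor, dim: int = 1) -> torch.Tensor:
    # Subtracting the row-wise max avoids overflow in exp() and does not change the result
    shifted = logits - logits.max(dim=dim, keepdim=True).values
    exp = torch.exp(shifted)
    return exp / exp.sum(dim=dim, keepdim=True)

# First row of the untrained model's logits from the output above
logits = torch.tensor([[-0.7333, -0.0491, -0.1253, 0.2468]])
probs = manual_softmax(logits)

print(probs)               # approximately the first row of y_pred_probs above
print(probs.sum(dim=1))    # each row sums to 1
print(torch.allclose(probs, torch.softmax(logits, dim=1)))  # True
```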

y_pred_probs = torch.softmax(y_logits, dim=1)

y_pred_probs

tensor([[0.1336, 0.2649, 0.2454, 0.3561],

[0.1420, 0.2863, 0.2943, 0.2774],

[0.5663, 0.2171, 0.1573, 0.0593],

…,

[0.1361, 0.2749, 0.3078, 0.2811],

[0.4910, 0.2455, 0.1806, 0.0829],

[0.1920, 0.3041, 0.2983, 0.2056]], device='cuda:0')

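# For binary classification we used torch.round() to round the probabilities. How do we handle multi-class classification?

# With four classes, we pick the highest probability: the index of that maximum tells us which class it is

# Remember the tensor basics lesson?

# That's right: argmax() returns the position of the maximum value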

y_preds = torch.argmax(y_pred_probs[0])

print(y_preds)

tensor(3, device='cuda:0')

The model hasn't been trained yet, so its weights (and therefore these predictions) are essentially random and not very good.
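Note that torch.argmax(y_pred_probs[0]) above only labels the first sample. A minimal sketch of labelling a whole batch with argmax(dim=1), using the 1st and 3rd rows copied from the outputs above. Because softmax keeps the ordering of values within a row, taking argmax of the raw logits gives the same labels:

```python
import torch

# Rows 1 and 3 of y_pred_probs from the output above
y_pred_probs = torch.tensor([[0.1336, 0.2649, 0.2454, 0.3561],
                             [0.5663, 0.2171, 0.1573, 0.0593]])

first_label = torch.argmax(y_pred_probs[0])      # one label for one sample
all_labels = torch.argmax(y_pred_probs, dim=1)   # one label per row (sample)
print(first_label, all_labels)                   # tensor(3) tensor([3, 0])

# The matching raw logits: argmax before or after softmax gives the same answer
logits = torch.tensor([[-0.7333, -0.0491, -0.1253, 0.2468],
                       [1.1539, 0.1951, -0.1268, -1.1032]])
same = torch.equal(logits.argmax(dim=1), torch.softmax(logits, dim=1).argmax(dim=1))
print(same)  # True
```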

1.4 Loss Function and Optimizer

# Create the loss function
loss_fn = nn.CrossEntropyLoss()

# Create the optimizer
optimizer = optim.SGD(params=model_0.parameters(), lr=0.1)

# Define a function to compute accuracy
def accuracy_fn(y_true, y_pred):
    correct = torch.eq(y_true, y_pred).sum().item()
    return (correct / len(y_pred)) * 100
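nn.CrossEntropyLoss expects raw logits (it applies log-softmax internally) and integer class indices as targets. That is why the training loop below passes y_logits rather than softmax probabilities, and why y was converted to torch.LongTensor. A small check with random, purely illustrative logits that it equals log-softmax followed by negative log-likelihood:

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(42)
logits = torch.randn(5, 4)                  # 5 samples, 4 classes: raw model outputs
targets = torch.tensor([1, 0, 2, 2, 0])     # class indices, dtype torch.int64

ce = nn.CrossEntropyLoss()(logits, targets)

# Same value by hand: log_softmax, then negative log-likelihood
manual = F.nll_loss(F.log_softmax(logits, dim=1), targets)

# Even more explicitly: mean of -log(probability of the correct class)
explicit = -F.log_softmax(logits, dim=1)[torch.arange(5), targets].mean()

print(torch.allclose(ce, manual), torch.allclose(ce, explicit))  # True True
```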

1.5 Training and Testing

# Train the model
# Set the number of epochs
epochs = 100

for epoch in range(epochs):
    # Training
    model_0.train()
    y_logits = model_0(X_train)
    y_preds = torch.softmax(y_logits, dim=1).argmax(dim=1)
    loss = loss_fn(y_logits, y_train)
    acc = accuracy_fn(y_true=y_train, y_pred=y_preds)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

    # Testing
    model_0.eval()
    with torch.inference_mode():
        test_logits = model_0(X_test)
        test_preds = torch.softmax(test_logits, dim=1).argmax(dim=1)
        test_loss = loss_fn(test_logits, y_test)
        test_acc = accuracy_fn(y_true=y_test, y_pred=test_preds)

    # Print progress
    if epoch % 10 == 0:
        print(f"Epoch:{epoch} | Train Loss:{loss:.4f} | Train Accuracy:{acc:.2f}% | Test Loss:{test_loss:.4f} | Test Accuracy:{test_acc:.2f}%")

Epoch:0 | Train Loss:1.5929 | Train Accuracy:1.88% | Test Loss:1.2136 | Test Accuracy:31.50%

Epoch:10 | Train Loss:0.9257 | Train Accuracy:53.37% | Test Loss:0.8747 | Test Accuracy:72.00%

Epoch:20 | Train Loss:0.6246 | Train Accuracy:91.25% | Test Loss:0.5729 | Test Accuracy:97.00%

Epoch:30 | Train Loss:0.2904 | Train Accuracy:97.62% | Test Loss:0.2503 | Test Accuracy:99.00%

Epoch:40 | Train Loss:0.1271 | Train Accuracy:99.12% | Test Loss:0.1101 | Test Accuracy:99.00%

Epoch:50 | Train Loss:0.0771 | Train Accuracy:99.25% | Test Loss:0.0668 | Test Accuracy:99.00%

Epoch:60 | Train Loss:0.0576 | Train Accuracy:99.12% | Test Loss:0.0488 | Test Accuracy:99.00%

Epoch:70 | Train Loss:0.0479 | Train Accuracy:99.12% | Test Loss:0.0396 | Test Accuracy:99.50%

Epoch:80 | Train Loss:0.0422 | Train Accuracy:99.12% | Test Loss:0.0339 | Test Accuracy:99.50%

Epoch:90 | Train Loss:0.0385 | Train Accuracy:99.12% | Test Loss:0.0302 | Test Accuracy:99.50%

# Visualize

from helper_functions import plot_decision_boundary

plt.figure(figsize=(12, 6))

plt.subplot(1, 2, 1)

plt.title("Train")

plot_decision_boundary(model=model_0, X=X_train, y=y_train)

plt.subplot(1, 2, 2)

plt.title("Test")

plot_decision_boundary(model=model_0, X=X_test, y=y_test)
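plot_decision_boundary is imported from a separate helper_functions.py file that isn't shown here. The usual idea behind such a plot is to evaluate the model on a dense grid of points covering the data and colour each grid point by its predicted class. The sketch below is an assumption about how such a helper can work, not that helper's actual code; predict_on_grid and plot_boundary are hypothetical names:

```python
import torch
import torch.nn as nn

def predict_on_grid(model: nn.Module, X: torch.Tensor, steps: int = 101):
    """Hypothetical helper: predict a class for every point of a grid covering X."""
    X = X.detach().cpu()
    x_min, x_max = X[:, 0].min().item() - 0.1, X[:, 0].max().item() + 0.1
    y_min, y_max = X[:, 1].min().item() - 0.1, X[:, 1].max().item() + 0.1
    xx, yy = torch.meshgrid(torch.linspace(x_min, x_max, steps),
                            torch.linspace(y_min, y_max, steps), indexing="xy")
    grid = torch.stack([xx.ravel(), yy.ravel()], dim=1)

    # Run the grid on whatever device the model lives on, without moving the model
    device = next(model.parameters()).device
    model.eval()
    with torch.inference_mode():
        logits = model(grid.to(device)).cpu()

    if logits.shape[1] > 1:
        labels = logits.argmax(dim=1)              # multi-class: most likely class
    else:
        labels = (logits.squeeze(1) > 0).long()    # single binary logit: sigmoid(z) > 0.5
    return xx, yy, labels.reshape(xx.shape)

def plot_boundary(model: nn.Module, X: torch.Tensor, y: torch.Tensor):
    import matplotlib.pyplot as plt  # local import so predict_on_grid works without matplotlib
    xx, yy, labels = predict_on_grid(model, X)
    Xc, yc = X.detach().cpu(), y.detach().cpu()
    plt.contourf(xx.numpy(), yy.numpy(), labels.numpy(), cmap=plt.cm.RdYlBu, alpha=0.7)
    plt.scatter(Xc[:, 0].numpy(), Xc[:, 1].numpy(), c=yc.numpy(), s=40, cmap=plt.cm.RdYlBu)
```

Usage would mirror the cell above, for example plot_boundary(model_0, X_test, y_test) inside a plt.subplot.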

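Wow, that's a great result! Looking at these boundaries, though, the classes seem separable with straight lines, so the model might work without the non-linear activation function too: just remove the ReLU() layers from the model. Give it a try yourself; I won't go into it here~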
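Why might dropping ReLU() still work here? Without an activation in between, stacked Linear layers collapse into a single linear map, so the model can only draw straight-line boundaries. That may be enough for these well-separated blobs, but not for curved data like the moons and spirals in the exercises. A quick check of the collapse, with arbitrary layer sizes chosen for illustration:

```python
import torch
import torch.nn as nn

torch.manual_seed(42)
# Two Linear layers with no activation in between
stack = nn.Sequential(nn.Linear(2, 8), nn.Linear(8, 4))
first, second = stack[0], stack[1]

# Fold them into one equivalent layer: W = W2 @ W1, b = W2 @ b1 + b2
merged = nn.Linear(2, 4)
with torch.no_grad():
    merged.weight.copy_(second.weight @ first.weight)
    merged.bias.copy_(second.weight @ first.bias + second.bias)

x = torch.randn(10, 2)
print(torch.allclose(stack(x), merged(x), atol=1e-5))  # True: the stack is just one linear map
```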

1.6 Metrics for Measuring Model Performance

Accuracy isn't the only way to measure a model's performance. Others include:

- F1 score

- Precision

- Recall

- Confusion matrix

- Accuracy
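As a sketch of what the metrics in the list above measure, here they are computed by hand from a confusion matrix (rows = true class, columns = predicted class) on a tiny made-up example. confusion_matrix and per_class_metrics are illustrative helpers, not library functions; torchmetrics also provides Precision, Recall, F1Score and ConfusionMatrix classes that take the same task='multiclass' argument as Accuracy below.

```python
import torch

def confusion_matrix(y_true: torch.Tensor, y_pred: torch.Tensor, num_classes: int) -> torch.Tensor:
    # Each (true, pred) pair maps to one cell; bincount counts how often each cell occurs
    idx = y_true * num_classes + y_pred
    return torch.bincount(idx, minlength=num_classes ** 2).reshape(num_classes, num_classes)

def per_class_metrics(cm: torch.Tensor):
    cm = cm.float()
    tp = cm.diag()
    precision = tp / cm.sum(dim=0).clamp(min=1)   # TP / (TP + FP): column sums
    recall = tp / cm.sum(dim=1).clamp(min=1)      # TP / (TP + FN): row sums
    f1 = 2 * precision * recall / (precision + recall).clamp(min=1e-12)
    return precision, recall, f1

y_true = torch.tensor([0, 0, 1, 1, 2, 2, 3, 3])
y_pred = torch.tensor([0, 1, 1, 1, 2, 0, 3, 3])

cm = confusion_matrix(y_true, y_pred, num_classes=4)
precision, recall, f1 = per_class_metrics(cm)
accuracy = cm.diag().sum() / cm.sum()   # correct predictions / all predictions

print(cm)
print(precision, recall, f1, accuracy)
```

Averaging the per-class values (for example f1.mean()) gives a single "macro" score per metric.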

from torchmetrics import Accuracy

torchmetrics_accuracy = Accuracy(task='multiclass', num_classes=4).to(device)

# Compute the accuracy
torchmetrics_accuracy(test_preds, y_test)

tensor(0.9950, device='cuda:0')

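OK, we can also compute accuracy with a ready-made library function! That wraps up this lesson; let's move on to the exercises to check what we've learned~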

2 Exercises

1. Make a binary classification dataset with Scikit-Learn's make_moons() function.

* For consistency, the dataset should have 1000 samples and a random_state=42.

* Turn the data into PyTorch tensors. Split the data into training and test sets using train_test_split with 80% training and 20% testing.

from sklearn.datasets import make_moons

from sklearn.model_selection import train_test_split

import matplotlib.pyplot as plt

import torch

import torch.nn as nn

import torch.optim as optim

RANDOM_SEED = 42
X, y = make_moons(n_samples=1000, noise=0.03, random_state=RANDOM_SEED)
X[:5], y[:5]

(array([[-0.00933187, 0.39098105],

[ 0.95457387, -0.47375583],

[ 0.9185256 , -0.42519648],

[ 0.41276802, -0.37638459],

[-0.84532016, 0.52879908]]),

array([1, 1, 1, 1, 0], dtype=int64))

# Visualize the data

plt.figure(figsize=(10, 7))

plt.scatter(X[:,0], X[:,1], c=y, cmap=plt.cm.RdYlBu)

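Looking at the data, there are 2 input features and 1 output, and the plot shows a binary classification problem. Because the two classes form curved moon shapes, the model will definitely need non-linearity.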

# Convert the data to tensors

X = torch.from_numpy(X).type(torch.float)

y = torch.from_numpy(y).type(torch.float)

print(X.dtype, y.dtype)

print(X[:5], y[:5])

torch.float32 torch.float32

tensor([[-0.0093, 0.3910],

[ 0.9546, -0.4738],

[ 0.9185, -0.4252],

[ 0.4128, -0.3764],

[-0.8453, 0.5288]]) tensor([1., 1., 1., 1., 0.])

# Split the data into training and test sets

X_train, X_test, y_train, y_test = train_test_split(X, y,test_size=0.2,random_state=RANDOM_SEED)

len(X_train), len(X_test), len(y_train), len(y_test)

(800, 200, 800, 200)

2. Build a model by subclassing nn.Module that incorporates non-linear activation functions and is capable of fitting the data you created in 1.

* Feel free to use any combination of PyTorch layers (linear and non-linear) you want.

# Device-agnostic code

device = "cuda" if torch.cuda.is_available() else "cpu"

device

'cuda'

class MoonModel(nn.Module):
    def __init__(self, input_features, output_features, hidden_units):
        super().__init__()
        self.linear_stack = nn.Sequential(
            nn.Linear(in_features=input_features, out_features=hidden_units),
            # nn.ReLU(),
            nn.Tanh(),
            nn.Linear(in_features=hidden_units, out_features=hidden_units),
            # nn.ReLU(),
            nn.Tanh(),
            nn.Linear(in_features=hidden_units, out_features=output_features)
        )

    def forward(self, x):
        return self.linear_stack(x)

model_0 = MoonModel(input_features=2, output_features=1, hidden_units=10).to(device)

model_0

MoonModel(

(linear_stack): Sequential(

(0): Linear(in_features=2, out_features=10, bias=True)

(1): Tanh()

(2): Linear(in_features=10, out_features=10, bias=True)

(3): Tanh()

(4): Linear(in_features=10, out_features=1, bias=True)

)

)

3. Setup a binary classification compatible loss function and optimizer to use when training the model.

# Loss function and optimizer

loss_fn = nn.BCEWithLogitsLoss()

optimizer = optim.SGD(params=model_0.parameters(),lr=0.1)
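nn.BCEWithLogitsLoss combines a sigmoid and binary cross-entropy in one numerically stable step, which is why MoonModel outputs raw logits and has no sigmoid layer. A small sketch comparing it with torch.sigmoid followed by nn.BCELoss, on illustrative values:

```python
import torch
import torch.nn as nn

torch.manual_seed(42)
logits = torch.randn(8)                                   # raw outputs, like model_0(X).squeeze()
targets = torch.tensor([1., 1., 1., 1., 0., 0., 1., 0.])  # float labels, like the moons y

fused = nn.BCEWithLogitsLoss()(logits, targets)
two_step = nn.BCELoss()(torch.sigmoid(logits), targets)
print(torch.allclose(fused, two_step, atol=1e-6))  # True for moderate logits

# A very confident wrong prediction: logit 30 for a sample whose label is 0
big_logit, label = torch.tensor([30.0]), torch.tensor([0.0])
print(nn.BCEWithLogitsLoss()(big_logit, label))        # about 30, the true loss
print(nn.BCELoss()(torch.sigmoid(big_logit), label))   # 100: sigmoid(30) rounds to 1.0, log clamped at -100
```

For a logit of 30 the true loss is about 30, but sigmoid(30) rounds to exactly 1.0 in float32, so the two-step version hits BCELoss's clamp of log values at -100 and reports 100 instead.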

4. Create a training and testing loop to fit the model you created in 2 to the data you created in 1.

* To measure model accuracy, you can create your own accuracy function or use the accuracy function in TorchMetrics.

* Train the model for long enough for it to reach over 96% accuracy.

* The training loop should output progress every 10 epochs of the model’s training and test set loss and accuracy.

# Put all the data on the same device

X_train, y_train = X_train.to(device), y_train.to(device)

X_test, y_test = X_test.to(device), y_test.to(device)

# Function to compute accuracy
def accuracy_fn(y_true, y_pred):
    correct = torch.eq(y_true, y_pred).sum().item()
    return (correct / len(y_pred)) * 100

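# Shape check. The y_logits printed here is left over from a cell run later (the spiral model in Exercise 7), which is why its shape [240, 3] doesn't match this model
print(y_logits.shape, y_train.shape)
print(len(y_train))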

torch.Size([240, 3]) torch.Size([800])

800

# Training and testing
# Set the number of epochs
epochs = 1000

for epoch in range(epochs):
    # Training
    model_0.train()
    y_logits = model_0(X_train).squeeze()
    y_preds = torch.round(torch.sigmoid(y_logits))
    loss = loss_fn(y_logits, y_train)
    acc = accuracy_fn(y_true=y_train, y_pred=y_preds)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

    # Testing
    model_0.eval()
    with torch.inference_mode():
        test_logits = model_0(X_test).squeeze()
        test_preds = torch.round(torch.sigmoid(test_logits))
        test_loss = loss_fn(test_logits, y_test)
        test_acc = accuracy_fn(y_true=y_test, y_pred=test_preds)

    # Print progress
    if epoch % 10 == 0:
        print(f"Epoch:{epoch} | Train Loss:{loss:.4f} | Train Accuracy:{acc:.2f}% | Test Loss:{test_loss:.4f} | Test Accuracy:{test_acc:.2f}%")

Epoch:0 | Train Loss:0.7151 | Train Accuracy:30.63% | Test Loss:0.7045 | Test Accuracy:34.50%

Epoch:10 | Train Loss:0.6395 | Train Accuracy:80.50% | Test Loss:0.6378 | Test Accuracy:76.00%

Epoch:20 | Train Loss:0.5707 | Train Accuracy:79.88% | Test Loss:0.5754 | Test Accuracy:75.00%

…

Epoch:990 | Train Loss:0.0172 | Train Accuracy:100.00% | Test Loss:0.0150 | Test Accuracy:100.00%

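In the binary loop above, torch.round(torch.sigmoid(logits)) turns logits into 0/1 labels. Because sigmoid(z) > 0.5 exactly when z > 0, this is the same as thresholding the logits at 0 (torch.round rounds halves to even, so sigmoid(0) = 0.5 becomes 0). A quick check on a few illustrative logits:

```python
import torch

logits = torch.tensor([-2.0, -0.1, 0.0, 0.1, 3.0])

via_sigmoid = torch.round(torch.sigmoid(logits))   # what the training loop does
via_threshold = (logits > 0).float()               # skip the sigmoid entirely

print(via_sigmoid)    # tensor([0., 0., 0., 1., 1.])
print(via_threshold)  # tensor([0., 0., 0., 1., 1.])
```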

5. Make predictions with your trained model and plot them using the plot_decision_boundary() function created in this notebook.

# Visualize

from helper_functions import plot_decision_boundary

plt.figure(figsize=(12, 6))

plt.subplot(1, 2, 1)

plt.title("Training")

plot_decision_boundary(model=model_0, X=X_train, y=y_train)

plt.subplot(1, 2, 2)

plt.title("Testing")

plot_decision_boundary(model=model_0, X=X_test, y=y_test)

6. Replicate the Tanh (hyperbolic tangent) activation function in pure PyTorch.

* Feel free to reference the ML cheatsheet website for the formula.

For this one, we simply swap the ReLU() activations in the model for Tanh().
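Strictly, the exercise asks for tanh written out by hand rather than nn.Tanh(). A minimal sketch based on tanh(x) = (e^x - e^(-x)) / (e^x + e^(-x)); the code uses the algebraically equivalent form sign(x) * (1 - e^(-2|x|)) / (1 + e^(-2|x|)), which avoids overflow for large |x|. my_tanh and MyTanh are hypothetical names:

```python
import torch
import torch.nn as nn

def my_tanh(x: torch.Tensor) -> torch.Tensor:
    # tanh(x) = (e^x - e^-x) / (e^x + e^-x), rewritten with e^(-2|x|) so exp() never overflows
    e = torch.exp(-2 * x.abs())
    return torch.sign(x) * (1 - e) / (1 + e)

class MyTanh(nn.Module):
    # Could stand in for nn.Tanh() inside the nn.Sequential of MoonModel or SpiralModel
    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return my_tanh(x)

x = torch.linspace(-20, 20, 81)
print(torch.allclose(my_tanh(x), torch.tanh(x), atol=1e-6))  # True
print(my_tanh(torch.tensor([100.0, -100.0])))                # 1 and -1, no overflow
```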

7. Create a multi-class dataset using the spirals data creation function from CS231n (see below for the code).

* Construct a model capable of fitting the data (you may need a combination of linear and non-linear layers).

* Build a loss function and optimizer capable of handling multi-class data (optional extension: use the Adam optimizer instead of SGD, you may have to experiment with different values of the learning rate to get it working).

* Make a training and testing loop for the multi-class data and train a model on it to reach over 95% testing accuracy (you can use any accuracy measuring function here that you like).

* Plot the decision boundaries on the spirals dataset from your model predictions, the plot_decision_boundary() function should work for this dataset too.

# Code for creating a spiral dataset from CS231n

import numpy as np

N = 100 # number of points per class

D = 2 # dimensionality

K = 3 # number of classes

X = np.zeros((N*K,D)) # data matrix (each row = single example)

y = np.zeros(N*K, dtype='uint8') # class labels

for j in range(K):
    ix = range(N*j, N*(j+1))
    r = np.linspace(0.0, 1, N) # radius
    t = np.linspace(j*4, (j+1)*4, N) + np.random.randn(N)*0.2 # theta
    X[ix] = np.c_[r*np.sin(t), r*np.cos(t)]
    y[ix] = j

# lets visualize the data

plt.scatter(X[:, 0], X[:, 1], c=y, s=40, cmap=plt.cm.Spectral)

plt.show()

# Create the spiral dataset
RANDOM_SEED = 42

import numpy as np

N = 100 # number of points per class
D = 2 # dimensionality
K = 3 # number of classes
X = np.zeros((N*K, D))
y = np.zeros(N*K, dtype='uint8') # class labels

for j in range(K):
    ix = range(N*j, N*(j+1))
    r = np.linspace(0.0, 1, N) # radius
    t = np.linspace(j*4, (j+1)*4, N) + np.random.randn(N) * 0.2 # theta
    X[ix] = np.c_[r*np.sin(t), r*np.cos(t)]
    y[ix] = j

# Visualize the data

plt.scatter(X[:,0], X[:,1],c=y, s=40, cmap=plt.cm.Spectral)

plt.show()

print(X[:5],y[:5])

print(X.shape, y.shape)

[[0. 0. ]

[0.00183158 0.00993357]

[0.00362348 0.01987441]

[0.00290204 0.03016375]

[0.00458536 0.04014301]] [0 0 0 0 0]

(300, 2) (300,)

This shows our data has 2 inputs and 3 outputs, since there are 3 classes.

# Convert the data to tensors

X = torch.from_numpy(X).type(torch.float)

y = torch.from_numpy(y).type(torch.LongTensor)

X.dtype, y.dtype

(torch.float32, torch.int64)

# Split the data into training and test sets

X_train, X_test, y_train, y_test = train_test_split(X,y,test_size=0.2,random_state=RANDOM_SEED)

len(X_train), len(X_test), len(y_train), len(y_test)

(240, 60, 240, 60)

len(X), len(y)

(300, 300)

# Device-agnostic code

device = "cuda" if torch.cuda.is_available() else "cpu"

device

'cuda'

# Put the data on the same device

X_train, y_train = X_train.to(device), y_train.to(device)

X_test, y_test = X_test.to(device), y_test.to(device)

# Build the model
class SpiralModel(nn.Module):
    def __init__(self, input_features, output_features, hidden_layers):
        super().__init__()
        self.linear_stack = nn.Sequential(
            nn.Linear(in_features=input_features, out_features=hidden_layers),
            nn.Tanh(),
            nn.Linear(in_features=hidden_layers, out_features=hidden_layers),
            nn.Tanh(),
            nn.Linear(in_features=hidden_layers, out_features=output_features)
        )

    def forward(self, x):
        return self.linear_stack(x)

model_1 = SpiralModel(2, 3, 10).to(device)

model_1

SpiralModel(

(linear_stack): Sequential(

(0): Linear(in_features=2, out_features=10, bias=True)

(1): Tanh()

(2): Linear(in_features=10, out_features=10, bias=True)

(3): Tanh()

(4): Linear(in_features=10, out_features=3, bias=True)

)

)

# Loss function and optimizer

loss_fn = nn.CrossEntropyLoss()

optimizer = optim.Adam(params=model_1.parameters(),lr=0.1)

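# Shape check. At this point y_logits and y_preds still hold the moons model's training outputs (800 samples), not the spiral model's
print(y_logits.shape)
print(y_train.shape)
print(y_preds)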

torch.Size([800])

torch.Size([240])

tensor([1., 0., 0., 0., 1., 0., 1., 1., 0., 0., 1., 0., 0., 0., 1., 0., 0., 0.,

0., 1., 1., 1., 0., 1., 1., 1., 0., 0., 0., 0., 0., 0., 0., 1., 0., 0.,

1., 1., 1., 1., 1., 1., 0., 1., 0., 1., 0., 0., 0., 0., 0., 1., 0., 1.,

…

1., 1., 0., 0., 0., 0., 1., 1., 1., 0., 1., 0., 1., 0., 1., 1., 1., 0.,

0., 1., 0., 0., 1., 1., 1., 1., 0., 1., 0., 1., 0., 1., 1., 0., 0., 1.,

1., 1., 0., 1., 0., 0., 0., 1.], device='cuda:0',

grad_fn=)


torch.manual_seed(RANDOM_SEED)
torch.cuda.manual_seed(RANDOM_SEED)

# Training and testing
epochs = 100

for epoch in range(epochs):
    # Training
    model_1.train()
    y_logits = model_1(X_train)
    y_preds = torch.softmax(y_logits, dim=1).argmax(dim=1)
    loss = loss_fn(y_logits, y_train)
    acc = accuracy_fn(y_true=y_train, y_pred=y_preds)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

    # Testing
    model_1.eval()
    with torch.inference_mode():
        test_logits = model_1(X_test)
        test_preds = torch.softmax(test_logits, dim=1).argmax(dim=1)
        test_loss = loss_fn(test_logits, y_test)
        test_acc = accuracy_fn(y_true=y_test, y_pred=test_preds)

    # Print progress
    if epoch % 10 == 0:
        print(f"Epoch:{epoch} | Train Loss:{loss:.4f} | Train Accuracy:{acc:.2f}% | Test Loss:{test_loss:.4f} | Test Accuracy:{test_acc:.2f}%")

Epoch:0 | Train Loss:1.1065 | Train Accuracy:35.00% | Test Loss:0.9883 | Test Accuracy:40.00%

Epoch:10 | Train Loss:0.7705 | Train Accuracy:54.58% | Test Loss:0.7651 | Test Accuracy:55.00%

Epoch:20 | Train Loss:0.5308 | Train Accuracy:76.25% | Test Loss:0.4966 | Test Accuracy:75.00%

Epoch:30 | Train Loss:0.2872 | Train Accuracy:93.33% | Test Loss:0.2097 | Test Accuracy:95.00%

Epoch:40 | Train Loss:0.1477 | Train Accuracy:95.00% | Test Loss:0.0998 | Test Accuracy:98.33%

Epoch:50 | Train Loss:0.0820 | Train Accuracy:97.50% | Test Loss:0.0472 | Test Accuracy:100.00%

Epoch:60 | Train Loss:0.0488 | Train Accuracy:99.17% | Test Loss:0.0247 | Test Accuracy:100.00%

Epoch:70 | Train Loss:0.0418 | Train Accuracy:97.92% | Test Loss:0.0127 | Test Accuracy:100.00%

Epoch:80 | Train Loss:0.0307 | Train Accuracy:99.17% | Test Loss:0.0168 | Test Accuracy:100.00%

Epoch:90 | Train Loss:0.0282 | Train Accuracy:99.17% | Test Loss:0.0060 | Test Accuracy:100.00%

# Let's visualize it

plt.figure(figsize=(12, 6))

plt.subplot(1, 2, 1)

plt.title("Training")

plot_decision_boundary(model=model_1, X=X_train, y=y_train)

plt.subplot(1, 2, 2)

plt.title("Testing")

plot_decision_boundary(model=model_1, X=X_test, y=y_test)

Wow, look how well the model fits the data. Amazing!

This work is so good!

BB, that's it for today's lesson!

By the way, the grilled gluten skewers on Hubu Alley are seriously super delicious!

BB, if you found this document helpful, remember to give me a like!

Thankies BB, thank you BB~