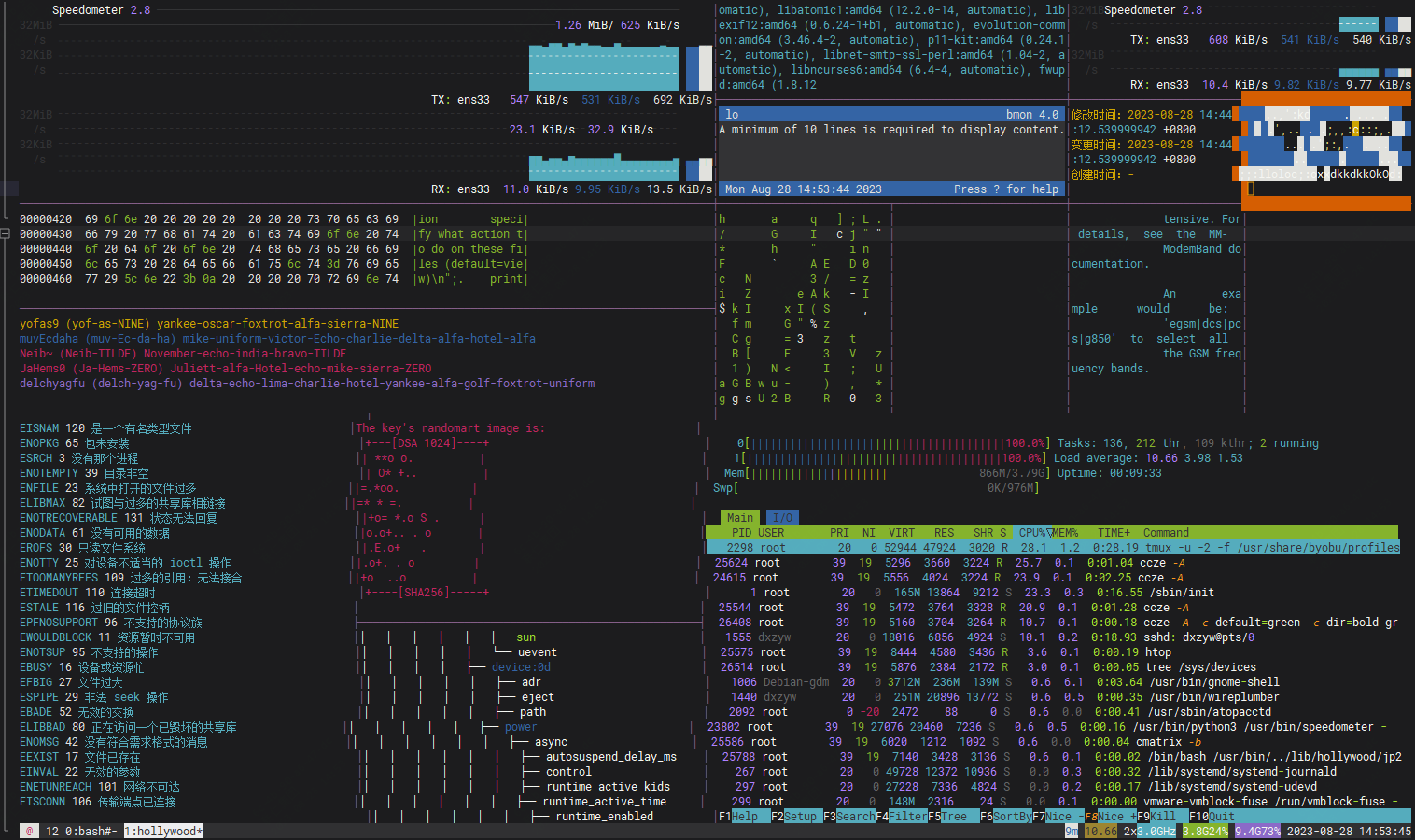

Concurrent load testing of a vLLM model with hey

    docker run --rm --network=knowledge_network \
      registry.cn-shanghai.aliyuncs.com/zhph-server/hey:latest \
      -n 200 -c 200 -m POST \
      -H "Content-Type: application/json" \
      -H "Authorization: xxx" \
      -d '{"model": "codechat","messages": [{"role": "system", "content": "You are a helpful assistant."},{"role": "user", "content": "Hello!"}],"stream": false,"max_tokens": 100,"temperature": 0.0}' \
      http://vllm-openai:80/v1/chat/completions

    docker run --rm --network=knowledge_network \
      registry.cn-shanghai.aliyuncs.com/zhph-server/hey:latest \
      -n 200 -c 200 -m POST \
      -H "Content-Type: application/json" \
      -H "Authorization: xxx" \
      -d '{"model": "codebase","prompt": "# write a python code to print hello world","stream": false,"max_tokens": 100,"temperature": 0.5}' \
      http://vllm-openai:80/v1/completions

Results

    Summary:
      Total:        2.2220 secs
      Slowest:      1.3603 secs
      Fastest:      0.7641 secs
      Average:      1.0815 secs
      Requests/sec: 43.2034

      Total data:   28992 bytes
      Size/request: 302 bytes

    Response time histogram:
      0.764 [1]  |■
      0.824 [5]  |■■■■■■■
      0.883 [4]  |■■■■■■
      0.943 [7]  |■■■■■■■■■■
      1.003 [11] |■■■■■■■■■■■■■■■■
      1.062 [7]  |■■■■■■■■■■
      1.122 [28] |■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■■
      1.181 [7]  |■■■■■■■■■■
      1.241 [9]  |■■■■■■■■■■■■■
      1.301 [9]  |■■■■■■■■■■■■■
      1.360 [8]  |■■■■■■■■■■■

    Latency distribution:
      10% in 0.9175 secs
      25% in 0.9570 secs
      50% in 1.0721 secs
      75% in 1.2131 secs
      90% in 1.2790 secs
      95% in 1.3599 secs
      0% in 0.0000 secs

    Details (average, fastest, slowest):
      DNS+dialup:   0.0036 secs, 0.7641 secs, 1.3603 secs
      DNS-lookup:   0.0013 secs, 0.0000 secs, 0.0075 secs
      req write:    0.0003 secs, 0.0000 secs, 0.0051 secs
      resp wait:    1.0774 secs, 0.7640 secs, 1.3533 secs
      resp read:    0.0001 secs, 0.0000 secs, 0.0002 secs

    Status code distribution:
      [200] 96 responses
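
As a quick sanity check, the throughput and per-response size can be recomputed from the figures in the summary. The sketch below is only an illustration: it assumes `awk` is available, and the variable names are made up for this example. It uses the 96 responses from the status code line rather than the 200 passed to `-n`, since 96 is the count that the histogram and the total data size agree with. The recomputed rate can differ from the reported 43.2034 in the last decimals because the printed total time is rounded.

```shell
# Figures copied from the hey summary above (variable names are illustrative).
responses=96        # from "Status code distribution: [200] 96 responses"
total_secs=2.2220   # from "Total"
total_bytes=28992   # from "Total data"

# Throughput: completed responses divided by wall-clock time.
rps=$(awk -v n="$responses" -v t="$total_secs" 'BEGIN { printf "%.2f", n / t }')

# Average response body size: total bytes divided by completed responses.
bytes_per_resp=$(awk -v b="$total_bytes" -v n="$responses" 'BEGIN { printf "%d", b / n }')

echo "requests/sec (recomputed): $rps"
echo "bytes per response:        $bytes_per_resp"
```

Both values line up with the `Requests/sec` and `Size/request` lines that hey reported.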