[CanMV K230 AI Vision] Face Recognition

- Face Recognition

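You can watch the live test results for yourself on the sites below.

Bilibili video link: compiled into a collection

Douyin link: compiled into a collection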

Face Recognition

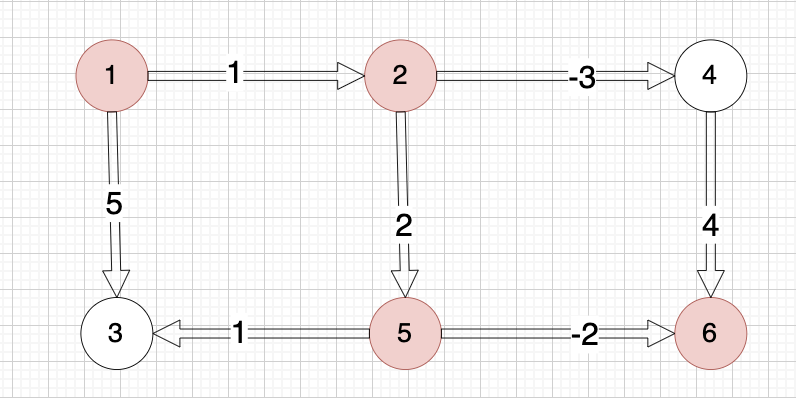

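The face detection experiment covered earlier only locates faces. The face recognition in this experiment goes further: it extracts the features of each face and then compares them, which makes it possible to tell different faces apart. The familiar face-based attendance machine works on this principle.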
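To make "compare the features" a bit more concrete, here is a minimal sketch in plain Python. It follows the scoring used in the recognition script's `database_search` (normalize both vectors, take the dot product, then map it with `/2 + 0.5`). The function name `similarity_score` and the toy vectors are my own illustration, not part of the 01Studio code.

```python
import math

def similarity_score(a, b):
    """Cosine similarity of two feature vectors, rescaled from [-1, 1] to [0, 1]."""
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    cosine = sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)
    return cosine / 2 + 0.5

# Toy vectors (made up): same direction, opposite direction, orthogonal
same = similarity_score([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
opposite = similarity_score([1.0, 0.0], [-1.0, 0.0])
orthogonal = similarity_score([1.0, 0.0], [0.0, 1.0])
print(same, opposite, orthogonal)
```

A score close to 1 means the two features point the same way. The recognition script only accepts a match when the best score reaches its threshold (0.75 in the example).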

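Run the face registration code first. It processes the face images in the /sdcard/app/tests/utils/db_img/ directory on the CanMV USB drive. The directory comes with 2 face images preinstalled, and I added 3 of my own.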
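The registration script names each database entry after the image file: it keeps the text before the first `.` and appends `.bin`. The sketch below reproduces just that rule in plain Python. The helper name `feature_file_for` and the sample file names are hypothetical.

```python
def feature_file_for(image_name, database_dir="/sdcard/app/tests/utils/db/"):
    """Return the feature file path the registration script would write for an image."""
    # Same rule as the script: text before the first '.' plus '.bin'
    person = image_name.split('.')[0]
    return database_dir + '{}.bin'.format(person)

# Hypothetical file names, for illustration only
print(feature_file_for("alice.jpg"))
print(feature_file_for("bob.v2.png"))
```

Since the recognition script later shows this stem as the person's name, it is worth naming each photo after the person and avoiding extra dots in the file name.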

```python
'''
Experiment: Face registration
Platform: 01Studio CanMV K230
Tutorial: wiki.01studio.cc
'''
from libs.PipeLine import PipeLine, ScopedTiming
from libs.AIBase import AIBase
from libs.AI2D import Ai2d
import os
import ujson
from media.media import *
from time import *
import nncase_runtime as nn
import ulab.numpy as np
import time
import image
import aidemo
import random
import gc
import sys
import math

# Custom face detection task class
class FaceDetApp(AIBase):
    def __init__(self,kmodel_path,model_input_size,anchors,confidence_threshold=0.25,nms_threshold=0.3,rgb888p_size=[1280,720],display_size=[1920,1080],debug_mode=0):
        super().__init__(kmodel_path,model_input_size,rgb888p_size,debug_mode)
        # kmodel path
        self.kmodel_path=kmodel_path
        # Detection model input resolution
        self.model_input_size=model_input_size
        # Confidence threshold
        self.confidence_threshold=confidence_threshold
        # NMS threshold
        self.nms_threshold=nms_threshold
        self.anchors=anchors
        # Resolution of the image the sensor passes to the AI, width aligned to 16 bytes
        self.rgb888p_size=[ALIGN_UP(rgb888p_size[0],16),rgb888p_size[1]]
        # Video output (VO) resolution, width aligned to 16 bytes
        self.display_size=[ALIGN_UP(display_size[0],16),display_size[1]]
        # Debug mode
        self.debug_mode=debug_mode
        # Instantiate Ai2d, used for model preprocessing
        self.ai2d=Ai2d(debug_mode)
        # Set the Ai2d input/output format and data type
        self.ai2d.set_ai2d_dtype(nn.ai2d_format.NCHW_FMT,nn.ai2d_format.NCHW_FMT,np.uint8, np.uint8)
        self.image_size=[]

    # Configure preprocessing. pad and resize are used here; Ai2d supports
    # crop/shift/pad/resize/affine, see /sdcard/app/libs/AI2D.py for details
    def config_preprocess(self,input_image_size=None):
        with ScopedTiming("set preprocess config",self.debug_mode > 0):
            # Initialize the ai2d preprocessing config. It defaults to the size the sensor passes to the AI;
            # set input_image_size to use a different input size
            ai2d_input_size=input_image_size if input_image_size else self.rgb888p_size
            self.image_size=[input_image_size[1],input_image_size[0]]
            # Compute the padding parameters and set up padding
            self.ai2d.pad(self.get_pad_param(ai2d_input_size), 0, [104,117,123])
            # Set up resize
            self.ai2d.resize(nn.interp_method.tf_bilinear, nn.interp_mode.half_pixel)
            # Build the preprocessing pipeline; the arguments are the input and output tensor shapes
            self.ai2d.build([1,3,ai2d_input_size[1],ai2d_input_size[0]],[1,3,self.model_input_size[1],self.model_input_size[0]])

    # Custom postprocessing. results is the list of model output arrays;
    # this uses the face_det_post_process interface of the aidemo library
    def postprocess(self,results):
        with ScopedTiming("postprocess",self.debug_mode > 0):
            res = aidemo.face_det_post_process(self.confidence_threshold,self.nms_threshold,self.model_input_size[0],self.anchors,self.image_size,results)
            if len(res)==0:
                # Return a pair (as the recognition script does) so the caller can always unpack two values
                return res,res
            else:
                return res[0],res[1]

    def get_pad_param(self,image_input_size):
        dst_w = self.model_input_size[0]
        dst_h = self.model_input_size[1]
        # Use the smaller ratio so the image is scaled proportionally
        ratio_w = dst_w / image_input_size[0]
        ratio_h = dst_h / image_input_size[1]
        if ratio_w < ratio_h:
            ratio = ratio_w
        else:
            ratio = ratio_h
        new_w = (int)(ratio * image_input_size[0])
        new_h = (int)(ratio * image_input_size[1])
        dw = (dst_w - new_w) / 2
        dh = (dst_h - new_h) / 2
        top = (int)(round(0))
        bottom = (int)(round(dh * 2 + 0.1))
        left = (int)(round(0))
        right = (int)(round(dw * 2 - 0.1))
        return [0,0,0,0,top, bottom, left, right]

# Custom face registration task class
class FaceRegistrationApp(AIBase):
    def __init__(self,kmodel_path,model_input_size,rgb888p_size=[1920,1080],display_size=[1920,1080],debug_mode=0):
        super().__init__(kmodel_path,model_input_size,rgb888p_size,debug_mode)
        # kmodel path
        self.kmodel_path=kmodel_path
        # Face registration model input resolution
        self.model_input_size=model_input_size
        # Resolution of the image the sensor passes to the AI, width aligned to 16 bytes
        self.rgb888p_size=[ALIGN_UP(rgb888p_size[0],16),rgb888p_size[1]]
        # Video output (VO) resolution, width aligned to 16 bytes
        self.display_size=[ALIGN_UP(display_size[0],16),display_size[1]]
        # Debug mode
        self.debug_mode=debug_mode
        # Standard positions of the 5 facial landmarks
        self.umeyama_args_112 = [
            38.2946 , 51.6963 ,
            73.5318 , 51.5014 ,
            56.0252 , 71.7366 ,
            41.5493 , 92.3655 ,
            70.7299 , 92.2041
        ]
        self.ai2d=Ai2d(debug_mode)
        self.ai2d.set_ai2d_dtype(nn.ai2d_format.NCHW_FMT,nn.ai2d_format.NCHW_FMT,np.uint8, np.uint8)

    # Configure preprocessing. affine is used here; Ai2d supports
    # crop/shift/pad/resize/affine, see /sdcard/app/libs/AI2D.py for details
    def config_preprocess(self,landm,input_image_size=None):
        with ScopedTiming("set preprocess config",self.debug_mode > 0):
            ai2d_input_size=input_image_size if input_image_size else self.rgb888p_size
            # Compute the affine matrix and set up the affine transform
            affine_matrix = self.get_affine_matrix(landm)
            self.ai2d.affine(nn.interp_method.cv2_bilinear,0, 0, 127, 1,affine_matrix)
            # Build the preprocessing pipeline; the arguments are the input and output tensor shapes
            self.ai2d.build([1,3,ai2d_input_size[1],ai2d_input_size[0]],[1,3,self.model_input_size[1],self.model_input_size[0]])

    # Custom postprocessing
    def postprocess(self,results):
        with ScopedTiming("postprocess",self.debug_mode > 0):
            return results[0][0]

    def svd22(self,a):
        # svd
        s = [0.0, 0.0]
        u = [0.0, 0.0, 0.0, 0.0]
        v = [0.0, 0.0, 0.0, 0.0]
        s[0] = (math.sqrt((a[0] - a[3]) ** 2 + (a[1] + a[2]) ** 2) + math.sqrt((a[0] + a[3]) ** 2 + (a[1] - a[2]) ** 2)) / 2
        s[1] = abs(s[0] - math.sqrt((a[0] - a[3]) ** 2 + (a[1] + a[2]) ** 2))
        v[2] = math.sin((math.atan2(2 * (a[0] * a[1] + a[2] * a[3]), a[0] ** 2 - a[1] ** 2 + a[2] ** 2 - a[3] ** 2)) / 2) if \
            s[0] > s[1] else 0
        v[0] = math.sqrt(1 - v[2] ** 2)
        v[1] = -v[2]
        v[3] = v[0]
        u[0] = -(a[0] * v[0] + a[1] * v[2]) / s[0] if s[0] != 0 else 1
        u[2] = -(a[2] * v[0] + a[3] * v[2]) / s[0] if s[0] != 0 else 0
        u[1] = (a[0] * v[1] + a[1] * v[3]) / s[1] if s[1] != 0 else -u[2]
        u[3] = (a[2] * v[1] + a[3] * v[3]) / s[1] if s[1] != 0 else u[0]
        v[0] = -v[0]
        v[2] = -v[2]
        return u, s, v

    def image_umeyama_112(self,src):
        # Compute the affine transform matrix with the Umeyama algorithm
        SRC_NUM = 5
        SRC_DIM = 2
        src_mean = [0.0, 0.0]
        dst_mean = [0.0, 0.0]
        for i in range(0,SRC_NUM * 2,2):
            src_mean[0] += src[i]
            src_mean[1] += src[i + 1]
            dst_mean[0] += self.umeyama_args_112[i]
            dst_mean[1] += self.umeyama_args_112[i + 1]
        src_mean[0] /= SRC_NUM
        src_mean[1] /= SRC_NUM
        dst_mean[0] /= SRC_NUM
        dst_mean[1] /= SRC_NUM
        src_demean = [[0.0, 0.0] for _ in range(SRC_NUM)]
        dst_demean = [[0.0, 0.0] for _ in range(SRC_NUM)]
        for i in range(SRC_NUM):
            src_demean[i][0] = src[2 * i] - src_mean[0]
            src_demean[i][1] = src[2 * i + 1] - src_mean[1]
            dst_demean[i][0] = self.umeyama_args_112[2 * i] - dst_mean[0]
            dst_demean[i][1] = self.umeyama_args_112[2 * i + 1] - dst_mean[1]
        A = [[0.0, 0.0], [0.0, 0.0]]
        for i in range(SRC_DIM):
            for k in range(SRC_DIM):
                for j in range(SRC_NUM):
                    A[i][k] += dst_demean[j][i] * src_demean[j][k]
                A[i][k] /= SRC_NUM
        T = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
        U, S, V = self.svd22([A[0][0], A[0][1], A[1][0], A[1][1]])
        T[0][0] = U[0] * V[0] + U[1] * V[2]
        T[0][1] = U[0] * V[1] + U[1] * V[3]
        T[1][0] = U[2] * V[0] + U[3] * V[2]
        T[1][1] = U[2] * V[1] + U[3] * V[3]
        scale = 1.0
        src_demean_mean = [0.0, 0.0]
        src_demean_var = [0.0, 0.0]
        for i in range(SRC_NUM):
            src_demean_mean[0] += src_demean[i][0]
            src_demean_mean[1] += src_demean[i][1]
        src_demean_mean[0] /= SRC_NUM
        src_demean_mean[1] /= SRC_NUM
        for i in range(SRC_NUM):
            src_demean_var[0] += (src_demean_mean[0] - src_demean[i][0]) * (src_demean_mean[0] - src_demean[i][0])
            src_demean_var[1] += (src_demean_mean[1] - src_demean[i][1]) * (src_demean_mean[1] - src_demean[i][1])
        src_demean_var[0] /= SRC_NUM
        src_demean_var[1] /= SRC_NUM
        scale = 1.0 / (src_demean_var[0] + src_demean_var[1]) * (S[0] + S[1])
        T[0][2] = dst_mean[0] - scale * (T[0][0] * src_mean[0] + T[0][1] * src_mean[1])
        T[1][2] = dst_mean[1] - scale * (T[1][0] * src_mean[0] + T[1][1] * src_mean[1])
        T[0][0] *= scale
        T[0][1] *= scale
        T[1][0] *= scale
        T[1][1] *= scale
        return T

    def get_affine_matrix(self,sparse_points):
        # Get the affine transform matrix
        with ScopedTiming("get_affine_matrix", self.debug_mode > 1):
            # Compute the affine transform matrix with the Umeyama algorithm
            matrix_dst = self.image_umeyama_112(sparse_points)
            matrix_dst = [matrix_dst[0][0],matrix_dst[0][1],matrix_dst[0][2],
                          matrix_dst[1][0],matrix_dst[1][1],matrix_dst[1][2]]
            return matrix_dst

# Face registration task class
class FaceRegistration:
    def __init__(self,face_det_kmodel,face_reg_kmodel,det_input_size,reg_input_size,database_dir,anchors,confidence_threshold=0.25,nms_threshold=0.3,rgb888p_size=[1280,720],display_size=[1920,1080],debug_mode=0):
        # Face detection model path
        self.face_det_kmodel=face_det_kmodel
        # Face registration model path
        self.face_reg_kmodel=face_reg_kmodel
        # Face detection model input resolution
        self.det_input_size=det_input_size
        # Face registration model input resolution
        self.reg_input_size=reg_input_size
        self.database_dir=database_dir
        # anchors
        self.anchors=anchors
        # Confidence threshold
        self.confidence_threshold=confidence_threshold
        # NMS threshold
        self.nms_threshold=nms_threshold
        # Resolution of the image the sensor passes to the AI, width aligned to 16 bytes
        self.rgb888p_size=[ALIGN_UP(rgb888p_size[0],16),rgb888p_size[1]]
        # Video output (VO) resolution, width aligned to 16 bytes
        self.display_size=[ALIGN_UP(display_size[0],16),display_size[1]]
        # Debug mode
        self.debug_mode=debug_mode
        self.face_det=FaceDetApp(self.face_det_kmodel,model_input_size=self.det_input_size,anchors=self.anchors,confidence_threshold=self.confidence_threshold,nms_threshold=self.nms_threshold,debug_mode=0)
        self.face_reg=FaceRegistrationApp(self.face_reg_kmodel,model_input_size=self.reg_input_size,rgb888p_size=self.rgb888p_size)

    # run function
    def run(self,input_np,img_file):
        self.face_det.config_preprocess(input_image_size=[input_np.shape[3],input_np.shape[2]])
        det_boxes,landms=self.face_det.run(input_np)
        if det_boxes:
            if det_boxes.shape[0] == 1:
                # If exactly one face is detected, register it in the database
                db_i_name = img_file.split('.')[0]
                for landm in landms:
                    self.face_reg.config_preprocess(landm,input_image_size=[input_np.shape[3],input_np.shape[2]])
                    reg_result = self.face_reg.run(input_np)
                    with open(self.database_dir+'{}.bin'.format(db_i_name), "wb") as file:
                        file.write(reg_result.tobytes())
                        print('Success!')
            else:
                print('Only one person in a picture when you sign up')
        else:
            print('No person detected')

    def image2rgb888array(self,img):   # 4-dimensional
        # Convert the Image to rgb888 format
        with ScopedTiming("fr_kpu_deinit",self.debug_mode > 0):
            img_data_rgb888=img.to_rgb888()
            # hwc,rgb888
            img_hwc=img_data_rgb888.to_numpy_ref()
            shape=img_hwc.shape
            img_tmp = img_hwc.reshape((shape[0] * shape[1], shape[2]))
            img_tmp_trans = img_tmp.transpose()
            img_res=img_tmp_trans.copy()
            # chw,rgb888
            img_return=img_res.reshape((1,shape[2],shape[0],shape[1]))
        return img_return

if __name__=="__main__":
    # Face detection model path
    face_det_kmodel_path="/sdcard/app/tests/kmodel/face_detection_320.kmodel"
    # Face registration model path
    face_reg_kmodel_path="/sdcard/app/tests/kmodel/face_recognition.kmodel"
    # Other parameters
    anchors_path="/sdcard/app/tests/utils/prior_data_320.bin"
    database_dir="/sdcard/app/tests/utils/db/"
    database_img_dir="/sdcard/app/tests/utils/db_img/"
    face_det_input_size=[320,320]
    face_reg_input_size=[112,112]
    confidence_threshold=0.5
    nms_threshold=0.2
    anchor_len=4200
    det_dim=4
    anchors = np.fromfile(anchors_path, dtype=np.float)
    anchors = anchors.reshape((anchor_len,det_dim))
    max_register_face = 100   # Maximum number of faces in the database
    feature_num = 128         # Face recognition feature dimension

    fr=FaceRegistration(face_det_kmodel_path,face_reg_kmodel_path,det_input_size=face_det_input_size,reg_input_size=face_reg_input_size,database_dir=database_dir,anchors=anchors,confidence_threshold=confidence_threshold,nms_threshold=nms_threshold)
    try:
        # Get the list of images
        img_list = os.listdir(database_img_dir)
        for img_file in img_list:
            # Read one image from local storage
            full_img_file = database_img_dir + img_file
            print(full_img_file)
            img = image.Image(full_img_file)
            img.compress_for_ide()
            # Convert to rgb888 in chw layout
            rgb888p_img_ndarry = fr.image2rgb888array(img)
            # Register the face
            fr.run(rgb888p_img_ndarry,img_file)
            gc.collect()
    except Exception as e:
        sys.print_exception(e)
    finally:
        fr.face_det.deinit()
        fr.face_reg.deinit()
```
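The `get_pad_param` method in the registration script can be tried on a PC without the board. Below is a plain-Python copy of its arithmetic as I read it; the function name `pad_param` and the default 320x320 target are my own choices for the sketch. The image is scaled by the smaller of the two ratios, and the leftover space is padded only on the bottom and right.

```python
def pad_param(src_w, src_h, dst_w=320, dst_h=320):
    """Padding list in the same layout get_pad_param returns."""
    # Scale by the smaller ratio so the whole image fits inside dst_w x dst_h
    ratio = min(dst_w / src_w, dst_h / src_h)
    new_w = int(ratio * src_w)
    new_h = int(ratio * src_h)
    dw = (dst_w - new_w) / 2
    dh = (dst_h - new_h) / 2
    # All padding goes to the bottom and right, as in the script
    top = 0
    bottom = int(round(dh * 2 + 0.1))
    left = 0
    right = int(round(dw * 2 - 0.1))
    return [0, 0, 0, 0, top, bottom, left, right]

print(pad_param(1280, 720))  # landscape image: padding at the bottom
print(pad_param(360, 640))   # portrait image: padding on the right
```

For a 1280x720 image the ratio is 0.25, so the image shrinks to 320x180 and the remaining 140 rows are padded at the bottom.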

After it runs, 5 face database files appear in the /sdcard/app/tests/utils/db/ directory, one .bin file per registered image.
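Each of these files is just the raw bytes of one feature vector: the registration script writes `reg_result.tobytes()`, and the recognition script reads the bytes back with `np.frombuffer`. The sketch below imitates that round trip with the standard `array` module. It assumes the values are stored as 32-bit floats, which I have not verified on the board, and the helper names are made up.

```python
from array import array

FEATURE_NUM = 128  # feature dimension used in the scripts

def feature_to_bytes(feature):
    # Assumption: one 32-bit float per value
    return array('f', feature).tobytes()

def feature_from_bytes(data):
    values = array('f')
    values.frombytes(data)
    return list(values)

# Toy feature (made up); these values are exactly representable as 32-bit floats
feature = [i / 128 for i in range(FEATURE_NUM)]
blob = feature_to_bytes(feature)
restored = feature_from_bytes(blob)
print(len(blob), restored == feature)
```

Under that assumption, a 128-dimensional feature takes 512 bytes, so the size of a .bin file is a quick sanity check.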

You can also try for yourself whether a non-human picture can be registered.

Only one person in a picture when you sign up

In other words, a photo used for registration may contain only one person.
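The branch in `FaceRegistration.run` that produces these messages depends only on how many faces were detected in the photo. Here is a tiny stand-alone sketch of that decision; the function name `registration_outcome` is hypothetical, while the message strings are the ones the script prints.

```python
def registration_outcome(num_faces):
    """Message the registration script prints for a given number of detected faces."""
    if num_faces == 0:
        return 'No person detected'
    if num_faces == 1:
        # Only this case writes a feature file to the database
        return 'Success!'
    return 'Only one person in a picture when you sign up'

for n in (0, 1, 3):
    print(n, registration_outcome(n))
```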

After registration, run the code below to perform recognition.

```python
'''
Experiment: Face recognition
Platform: 01Studio CanMV K230
Tutorial: wiki.01studio.cc
Note: run the face registration code first, then run this code
'''
from libs.PipeLine import PipeLine, ScopedTiming
from libs.AIBase import AIBase
from libs.AI2D import Ai2d
import os
import ujson
from media.media import *
from time import *
import nncase_runtime as nn
import ulab.numpy as np
import time
import image
import aidemo
import random
import gc
import sys
import math

# Custom face detection task class
class FaceDetApp(AIBase):
    def __init__(self,kmodel_path,model_input_size,anchors,confidence_threshold=0.25,nms_threshold=0.3,rgb888p_size=[1920,1080],display_size=[1920,1080],debug_mode=0):
        super().__init__(kmodel_path,model_input_size,rgb888p_size,debug_mode)
        # kmodel path
        self.kmodel_path=kmodel_path
        # Detection model input resolution
        self.model_input_size=model_input_size
        # Confidence threshold
        self.confidence_threshold=confidence_threshold
        # NMS threshold
        self.nms_threshold=nms_threshold
        self.anchors=anchors
        # Resolution of the image the sensor passes to the AI, width aligned to 16 bytes
        self.rgb888p_size=[ALIGN_UP(rgb888p_size[0],16),rgb888p_size[1]]
        # Video output (VO) resolution, width aligned to 16 bytes
        self.display_size=[ALIGN_UP(display_size[0],16),display_size[1]]
        # Debug mode
        self.debug_mode=debug_mode
        # Instantiate Ai2d, used for model preprocessing
        self.ai2d=Ai2d(debug_mode)
        # Set the Ai2d input/output format and data type
        self.ai2d.set_ai2d_dtype(nn.ai2d_format.NCHW_FMT,nn.ai2d_format.NCHW_FMT,np.uint8, np.uint8)

    # Configure preprocessing. pad and resize are used here; Ai2d supports
    # crop/shift/pad/resize/affine, see /sdcard/app/libs/AI2D.py for details
    def config_preprocess(self,input_image_size=None):
        with ScopedTiming("set preprocess config",self.debug_mode > 0):
            # Initialize the ai2d preprocessing config. It defaults to the size the sensor passes to the AI;
            # set input_image_size to use a different input size
            ai2d_input_size=input_image_size if input_image_size else self.rgb888p_size
            # Compute the padding parameters and set up padding
            self.ai2d.pad(self.get_pad_param(), 0, [104,117,123])
            # Set up resize
            self.ai2d.resize(nn.interp_method.tf_bilinear, nn.interp_mode.half_pixel)
            # Build the preprocessing pipeline; the arguments are the input and output tensor shapes
            self.ai2d.build([1,3,ai2d_input_size[1],ai2d_input_size[0]],[1,3,self.model_input_size[1],self.model_input_size[0]])

    # Custom postprocessing. results is the list of model output arrays;
    # this uses the face_det_post_process interface of the aidemo library
    def postprocess(self,results):
        with ScopedTiming("postprocess",self.debug_mode > 0):
            res = aidemo.face_det_post_process(self.confidence_threshold,self.nms_threshold,self.model_input_size[0],self.anchors,self.rgb888p_size,results)
            if len(res)==0:
                return res,res
            else:
                return res[0],res[1]

    def get_pad_param(self):
        dst_w = self.model_input_size[0]
        dst_h = self.model_input_size[1]
        # Use the smaller ratio so the image is scaled proportionally
        ratio_w = dst_w / self.rgb888p_size[0]
        ratio_h = dst_h / self.rgb888p_size[1]
        if ratio_w < ratio_h:
            ratio = ratio_w
        else:
            ratio = ratio_h
        new_w = (int)(ratio * self.rgb888p_size[0])
        new_h = (int)(ratio * self.rgb888p_size[1])
        dw = (dst_w - new_w) / 2
        dh = (dst_h - new_h) / 2
        top = (int)(round(0))
        bottom = (int)(round(dh * 2 + 0.1))
        left = (int)(round(0))
        right = (int)(round(dw * 2 - 0.1))
        return [0,0,0,0,top, bottom, left, right]

# Custom face registration task class
class FaceRegistrationApp(AIBase):
    def __init__(self,kmodel_path,model_input_size,rgb888p_size=[1920,1080],display_size=[1920,1080],debug_mode=0):
        super().__init__(kmodel_path,model_input_size,rgb888p_size,debug_mode)
        # kmodel path
        self.kmodel_path=kmodel_path
        # Recognition model input resolution
        self.model_input_size=model_input_size
        # Resolution of the image the sensor passes to the AI, width aligned to 16 bytes
        self.rgb888p_size=[ALIGN_UP(rgb888p_size[0],16),rgb888p_size[1]]
        # Video output (VO) resolution, width aligned to 16 bytes
        self.display_size=[ALIGN_UP(display_size[0],16),display_size[1]]
        # Debug mode
        self.debug_mode=debug_mode
        # Standard positions of the 5 facial landmarks
        self.umeyama_args_112 = [
            38.2946 , 51.6963 ,
            73.5318 , 51.5014 ,
            56.0252 , 71.7366 ,
            41.5493 , 92.3655 ,
            70.7299 , 92.2041
        ]
        self.ai2d=Ai2d(debug_mode)
        self.ai2d.set_ai2d_dtype(nn.ai2d_format.NCHW_FMT,nn.ai2d_format.NCHW_FMT,np.uint8, np.uint8)

    # Configure preprocessing. affine is used here; Ai2d supports
    # crop/shift/pad/resize/affine, see /sdcard/app/libs/AI2D.py for details
    def config_preprocess(self,landm,input_image_size=None):
        with ScopedTiming("set preprocess config",self.debug_mode > 0):
            ai2d_input_size=input_image_size if input_image_size else self.rgb888p_size
            # Compute the affine matrix and set up the affine transform
            affine_matrix = self.get_affine_matrix(landm)
            self.ai2d.affine(nn.interp_method.cv2_bilinear,0, 0, 127, 1,affine_matrix)
            # Build the preprocessing pipeline; the arguments are the input and output tensor shapes
            self.ai2d.build([1,3,ai2d_input_size[1],ai2d_input_size[0]],[1,3,self.model_input_size[1],self.model_input_size[0]])

    # Custom postprocessing
    def postprocess(self,results):
        with ScopedTiming("postprocess",self.debug_mode > 0):
            return results[0][0]

    def svd22(self,a):
        # svd
        s = [0.0, 0.0]
        u = [0.0, 0.0, 0.0, 0.0]
        v = [0.0, 0.0, 0.0, 0.0]
        s[0] = (math.sqrt((a[0] - a[3]) ** 2 + (a[1] + a[2]) ** 2) + math.sqrt((a[0] + a[3]) ** 2 + (a[1] - a[2]) ** 2)) / 2
        s[1] = abs(s[0] - math.sqrt((a[0] - a[3]) ** 2 + (a[1] + a[2]) ** 2))
        v[2] = math.sin((math.atan2(2 * (a[0] * a[1] + a[2] * a[3]), a[0] ** 2 - a[1] ** 2 + a[2] ** 2 - a[3] ** 2)) / 2) if \
            s[0] > s[1] else 0
        v[0] = math.sqrt(1 - v[2] ** 2)
        v[1] = -v[2]
        v[3] = v[0]
        u[0] = -(a[0] * v[0] + a[1] * v[2]) / s[0] if s[0] != 0 else 1
        u[2] = -(a[2] * v[0] + a[3] * v[2]) / s[0] if s[0] != 0 else 0
        u[1] = (a[0] * v[1] + a[1] * v[3]) / s[1] if s[1] != 0 else -u[2]
        u[3] = (a[2] * v[1] + a[3] * v[3]) / s[1] if s[1] != 0 else u[0]
        v[0] = -v[0]
        v[2] = -v[2]
        return u, s, v

    def image_umeyama_112(self,src):
        # Compute the affine transform matrix with the Umeyama algorithm
        SRC_NUM = 5
        SRC_DIM = 2
        src_mean = [0.0, 0.0]
        dst_mean = [0.0, 0.0]
        for i in range(0,SRC_NUM * 2,2):
            src_mean[0] += src[i]
            src_mean[1] += src[i + 1]
            dst_mean[0] += self.umeyama_args_112[i]
            dst_mean[1] += self.umeyama_args_112[i + 1]
        src_mean[0] /= SRC_NUM
        src_mean[1] /= SRC_NUM
        dst_mean[0] /= SRC_NUM
        dst_mean[1] /= SRC_NUM
        src_demean = [[0.0, 0.0] for _ in range(SRC_NUM)]
        dst_demean = [[0.0, 0.0] for _ in range(SRC_NUM)]
        for i in range(SRC_NUM):
            src_demean[i][0] = src[2 * i] - src_mean[0]
            src_demean[i][1] = src[2 * i + 1] - src_mean[1]
            dst_demean[i][0] = self.umeyama_args_112[2 * i] - dst_mean[0]
            dst_demean[i][1] = self.umeyama_args_112[2 * i + 1] - dst_mean[1]
        A = [[0.0, 0.0], [0.0, 0.0]]
        for i in range(SRC_DIM):
            for k in range(SRC_DIM):
                for j in range(SRC_NUM):
                    A[i][k] += dst_demean[j][i] * src_demean[j][k]
                A[i][k] /= SRC_NUM
        T = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
        U, S, V = self.svd22([A[0][0], A[0][1], A[1][0], A[1][1]])
        T[0][0] = U[0] * V[0] + U[1] * V[2]
        T[0][1] = U[0] * V[1] + U[1] * V[3]
        T[1][0] = U[2] * V[0] + U[3] * V[2]
        T[1][1] = U[2] * V[1] + U[3] * V[3]
        scale = 1.0
        src_demean_mean = [0.0, 0.0]
        src_demean_var = [0.0, 0.0]
        for i in range(SRC_NUM):
            src_demean_mean[0] += src_demean[i][0]
            src_demean_mean[1] += src_demean[i][1]
        src_demean_mean[0] /= SRC_NUM
        src_demean_mean[1] /= SRC_NUM
        for i in range(SRC_NUM):
            src_demean_var[0] += (src_demean_mean[0] - src_demean[i][0]) * (src_demean_mean[0] - src_demean[i][0])
            src_demean_var[1] += (src_demean_mean[1] - src_demean[i][1]) * (src_demean_mean[1] - src_demean[i][1])
        src_demean_var[0] /= SRC_NUM
        src_demean_var[1] /= SRC_NUM
        scale = 1.0 / (src_demean_var[0] + src_demean_var[1]) * (S[0] + S[1])
        T[0][2] = dst_mean[0] - scale * (T[0][0] * src_mean[0] + T[0][1] * src_mean[1])
        T[1][2] = dst_mean[1] - scale * (T[1][0] * src_mean[0] + T[1][1] * src_mean[1])
        T[0][0] *= scale
        T[0][1] *= scale
        T[1][0] *= scale
        T[1][1] *= scale
        return T

    def get_affine_matrix(self,sparse_points):
        # Get the affine transform matrix
        with ScopedTiming("get_affine_matrix", self.debug_mode > 1):
            # Compute the affine transform matrix with the Umeyama algorithm
            matrix_dst = self.image_umeyama_112(sparse_points)
            matrix_dst = [matrix_dst[0][0],matrix_dst[0][1],matrix_dst[0][2],
                          matrix_dst[1][0],matrix_dst[1][1],matrix_dst[1][2]]
            return matrix_dst

# Face recognition task class
class FaceRecognition:
    def __init__(self,face_det_kmodel,face_reg_kmodel,det_input_size,reg_input_size,database_dir,anchors,confidence_threshold=0.25,nms_threshold=0.3,face_recognition_threshold=0.75,rgb888p_size=[1280,720],display_size=[1920,1080],debug_mode=0):
        # Face detection model path
        self.face_det_kmodel=face_det_kmodel
        # Face recognition model path
        self.face_reg_kmodel=face_reg_kmodel
        # Face detection model input resolution
        self.det_input_size=det_input_size
        # Face recognition model input resolution
        self.reg_input_size=reg_input_size
        self.database_dir=database_dir
        # anchors
        self.anchors=anchors
        # Confidence threshold
        self.confidence_threshold=confidence_threshold
        # NMS threshold
        self.nms_threshold=nms_threshold
        self.face_recognition_threshold=face_recognition_threshold
        # Resolution of the image the sensor passes to the AI, width aligned to 16 bytes
        self.rgb888p_size=[ALIGN_UP(rgb888p_size[0],16),rgb888p_size[1]]
        # Video output (VO) resolution, width aligned to 16 bytes
        self.display_size=[ALIGN_UP(display_size[0],16),display_size[1]]
        # Debug mode
        self.debug_mode=debug_mode
        self.max_register_face = 100      # Maximum number of faces in the database
        self.feature_num = 128            # Face recognition feature dimension
        self.valid_register_face = 0      # Number of registered faces
        self.db_name= []
        self.db_data= []
        self.face_det=FaceDetApp(self.face_det_kmodel,model_input_size=self.det_input_size,anchors=self.anchors,confidence_threshold=self.confidence_threshold,nms_threshold=self.nms_threshold,rgb888p_size=self.rgb888p_size,display_size=self.display_size,debug_mode=0)
        self.face_reg=FaceRegistrationApp(self.face_reg_kmodel,model_input_size=self.reg_input_size,rgb888p_size=self.rgb888p_size,display_size=self.display_size)
        self.face_det.config_preprocess()
        # Initialize the face database
        self.database_init()

    # run function
    def run(self,input_np):
        # Run face detection
        det_boxes,landms=self.face_det.run(input_np)
        recg_res = []
        for landm in landms:
            # For each set of facial landmarks, infer the face feature and score it against the database
            self.face_reg.config_preprocess(landm)
            feature=self.face_reg.run(input_np)
            res = self.database_search(feature)
            recg_res.append(res)
        return det_boxes,recg_res

    def database_init(self):
        # Initialize the data: build the list of names and the list of features
        with ScopedTiming("database_init", self.debug_mode > 1):
            db_file_list = os.listdir(self.database_dir)
            for db_file in db_file_list:
                if not db_file.endswith('.bin'):
                    continue
                if self.valid_register_face >= self.max_register_face:
                    break
                valid_index = self.valid_register_face
                full_db_file = self.database_dir + db_file
                with open(full_db_file, 'rb') as f:
                    data = f.read()
                feature = np.frombuffer(data, dtype=np.float)
                self.db_data.append(feature)
                name = db_file.split('.')[0]
                self.db_name.append(name)
                self.valid_register_face += 1

    def database_reset(self):
        # Clear the database
        with ScopedTiming("database_reset", self.debug_mode > 1):
            print("database clearing...")
            self.db_name = []
            self.db_data = []
            self.valid_register_face = 0
            print("database clear Done!")

    def database_search(self,feature):
        # Query the database
        with ScopedTiming("database_search", self.debug_mode > 1):
            v_id = -1
            v_score_max = 0.0
            # Normalize the current face feature
            feature /= np.linalg.norm(feature)
            # Walk through the face database and track the highest score
            for i in range(self.valid_register_face):
                db_feature = self.db_data[i]
                db_feature /= np.linalg.norm(db_feature)
                # Similarity between the database feature and the current face feature
                v_score = np.dot(feature, db_feature)/2 + 0.5
                if v_score > v_score_max:
                    v_score_max = v_score
                    v_id = i
            if v_id == -1:
                # No face in the database
                return 'unknown'
            elif v_score_max < self.face_recognition_threshold:
                # Below the recognition threshold: not recognized
                return 'unknown'
            else:
                # Recognized
                result = 'name: {}, score:{}'.format(self.db_name[v_id],v_score_max)
                return result

    # Draw the recognition results
    def draw_result(self,pl,dets,recg_results):
        pl.osd_img.clear()
        if dets:
            for i,det in enumerate(dets):
                # (1) Draw the face box
                x1, y1, w, h = map(lambda x: int(round(x, 0)), det[:4])
                x1 = x1 * self.display_size[0]//self.rgb888p_size[0]
                y1 = y1 * self.display_size[1]//self.rgb888p_size[1]
                w = w * self.display_size[0]//self.rgb888p_size[0]
                h = h * self.display_size[1]//self.rgb888p_size[1]
                pl.osd_img.draw_rectangle(x1,y1, w, h, color=(255,0, 0, 255), thickness = 4)
                # (2) Write the recognition result
                recg_text = recg_results[i]
                pl.osd_img.draw_string_advanced(x1,y1,32,recg_text,color=(255, 255, 0, 0))

if __name__=="__main__":
    # Note: before running face recognition, run face registration first to generate the feature database
    # Display mode: "hdmi" or "lcd"; this example uses "lcd"
    display_mode="lcd"
    if display_mode=="hdmi":
        display_size=[1920,1080]
    else:
        display_size=[800,480]
    # Face detection model path
    face_det_kmodel_path="/sdcard/app/tests/kmodel/face_detection_320.kmodel"
    # Face recognition model path
    face_reg_kmodel_path="/sdcard/app/tests/kmodel/face_recognition.kmodel"
    # Other parameters
    anchors_path="/sdcard/app/tests/utils/prior_data_320.bin"
    database_dir ="/sdcard/app/tests/utils/db/"
    rgb888p_size=[1920,1080]
    face_det_input_size=[320,320]
    face_reg_input_size=[112,112]
    confidence_threshold=0.5
    nms_threshold=0.2
    anchor_len=4200
    det_dim=4
    anchors = np.fromfile(anchors_path, dtype=np.float)
    anchors = anchors.reshape((anchor_len,det_dim))
    face_recognition_threshold = 0.75   # Face recognition threshold

    # Initialize the PipeLine; only the AI image resolution and the display resolution matter here
    pl=PipeLine(rgb888p_size=rgb888p_size,display_size=display_size,display_mode=display_mode)
    pl.create()
    fr=FaceRecognition(face_det_kmodel_path,face_reg_kmodel_path,det_input_size=face_det_input_size,reg_input_size=face_reg_input_size,database_dir=database_dir,anchors=anchors,confidence_threshold=confidence_threshold,nms_threshold=nms_threshold,face_recognition_threshold=face_recognition_threshold,rgb888p_size=rgb888p_size,display_size=display_size)

    clock = time.clock()

    try:
        while True:
            os.exitpoint()
            clock.tick()
            img=pl.get_frame()                       # Get the current frame
            det_boxes,recg_res=fr.run(img)           # Run inference on the current frame
            print(det_boxes,recg_res)                # Print the results
            fr.draw_result(pl,det_boxes,recg_res)    # Draw the inference results
            pl.show_image()                          # Show the result
            gc.collect()
            print(clock.fps())                       # Print the frame rate
    except Exception as e:
        sys.print_exception(e)
    finally:
        fr.face_det.deinit()
        fr.face_reg.deinit()
        pl.destroy()
```
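In both scripts, `get_affine_matrix` flattens the top two rows of the Umeyama matrix `T` into six numbers, `[T00, T01, T02, T10, T11, T12]`. Read as a 2x3 matrix, they take a landmark position in the source image toward the 112x112 template. The sketch below only shows that arithmetic convention; how `Ai2d` uses the matrix internally is not visible in the scripts, and the helper name and sample matrices are my own.

```python
def apply_affine(matrix, x, y):
    """Apply a flattened 2x3 affine matrix [a, b, c, d, e, f] to the point (x, y)."""
    a, b, c, d, e, f = matrix
    return (a * x + b * y + c, d * x + e * y + f)

# Identity: the point stays where it is
print(apply_affine([1, 0, 0, 0, 1, 0], 3, 4))

# Made-up example: halve the coordinates, then shift by (10, 20)
print(apply_affine([0.5, 0, 10, 0, 0.5, 20], 100, 200))
```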

| Main-code variable | Description |
|---|---|
| det_boxes | Face detection results |
| recg_res | Face recognition results |
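The two results line up by index: the i-th box in `det_boxes` goes with the i-th string in `recg_res`. Before drawing, `draw_result` rescales each box from the AI frame size to the display size. The sketch below repeats that rescaling in plain Python for the sizes used in the example (1920x1080 AI frame, 800x480 LCD). The helper name and the sample box and name are invented for illustration.

```python
def to_display_box(det, ai_size=(1920, 1080), display_size=(800, 480)):
    """Scale an (x, y, w, h) box from AI-frame pixels to display pixels."""
    x, y, w, h = (int(round(v)) for v in det[:4])
    x = x * display_size[0] // ai_size[0]
    y = y * display_size[1] // ai_size[1]
    w = w * display_size[0] // ai_size[0]
    h = h * display_size[1] // ai_size[1]
    return x, y, w, h

# Made-up results in the shape the main loop prints
det_boxes = [[960.0, 540.0, 192.0, 108.0]]
recg_res = ['name: alice, score:0.91']

for det, text in zip(det_boxes, recg_res):
    print(to_display_box(det), text)
```

Integer floor division keeps the coordinates as whole pixels, which the drawing calls in the script are given.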